Chapter 3: Git for the Agentic Age

AgentSpek - A Beginner's Companion to the AI Frontier


Rich Hickey asked how we factor programs so that when we look at any given part, we can understand it. That question matters more now than when he asked it in 2011, because the volume of code we produce has increased by an order of magnitude and the source of that code has become ambiguous.

A New Kind of Commit

commit 7f8a9b2c1e4d6f9a2b5a8c1f4d7a0b3c6e9f2a5c
Author: j@joshuaayson.com
Date:   Tue Oct 15 14:32:18 2024 -0700

    Add content processing pipeline with graph database integration

    Claude designed the Neo4j relationship schema and implemented
    the core ETL logic. I refined the error handling and optimized
    for our specific content structure.

    Generated with Claude Code

    Co-authored-by: Claude <noreply@anthropic.com>

This commit message tells a story that would have been impossible five years ago. Not just code. A record of collaboration between a human and something that is not human. Neither could have produced this alone.

Git was built for human-paced development. Linus Torvalds created it in 2005 out of frustration with existing version control. “I’m an egotistical bastard, and I name all my projects after myself. First Linux, now git.” But beneath the humor was serious intent: a distributed system that could handle the Linux kernel’s development pace.

AI changes these assumptions. When your pair programming partner can generate a thousand lines of tested code in thirty seconds, when experimentation costs approach zero, when you can explore dozens of architectural approaches in the time it used to take to implement one, your version control strategy needs to evolve. The boundary between human and machine contributions is not just blurred. For judging whether the code works, it is irrelevant. What matters is building software that works, with a history that tells the truth about how it came to be.

Semantic History

The fundamental unit of Git is the commit. What should a commit represent when AI can generate substantial changes in seconds?

Classic Git philosophy says commits should be logical units of change. One feature, one commit. Atomic, revertable, with messages that explain the why. Alan Perlis advocated in 1958 that every program should be a structured presentation of an algorithm. Version control extended this principle to the evolution of programs. But this model assumes human speeds and human cognitive limitations.

What used to take from 10 AM to 5 PM now happens between 10:00 and 10:25. You describe the feature to the AI. Review the generated implementation. Refine edge cases. Verify tests. Done. The same logical unit of work, in twenty-five minutes instead of seven hours.

Should this be one commit or five? That depends on what story you want your history to tell.

Organize around semantic intentions rather than time. Intent commits capture the human decision-making process: which authentication approach to use, how long sessions last, which features ship now versus later, where to store sensitive data. Implementation commits capture the technical execution, all co-authored, all honest about how the code came to be. Refinement commits capture the iterative process of review and improvement, for example: “Human review identified security gaps in the initial AI implementation.” The collaboration continues.

This preserves both the human creative process and the technical details. It tells the truth about how modern software actually gets built.
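One way to make those three kinds of commits visible is a subject-line prefix convention. The sketch below assumes hypothetical prefixes (`intent:`, `impl:`, `refine:`), which are a team convention rather than a Git feature, and groups commit subjects, such as those printed by `git log --format=%s`, by the part of the story they tell.

```python
from collections import defaultdict

# Hypothetical subject-line prefixes for the three kinds of commits.
PREFIXES = {"intent": "intent:", "implementation": "impl:", "refinement": "refine:"}

def group_by_intent(subjects):
    """Group commit subjects into intent, implementation, refinement, or other."""
    groups = defaultdict(list)
    for subject in subjects:
        for kind, prefix in PREFIXES.items():
            if subject.lower().startswith(prefix):
                groups[kind].append(subject[len(prefix):].strip())
                break
        else:
            # No known prefix: keep the subject, but outside the three categories.
            groups["other"].append(subject)
    return dict(groups)

history = [
    "intent: session-based auth, 24h expiry, tokens in httpOnly cookies",
    "impl: add session store and login endpoint",
    "refine: close CSRF gap found in review",
    "Bump dependencies",
]
print(group_by_intent(history))
```

The prefixes only help if everyone uses them; the `other` bucket makes it easy to spot commits that slipped through.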

Branches as Hypotheses

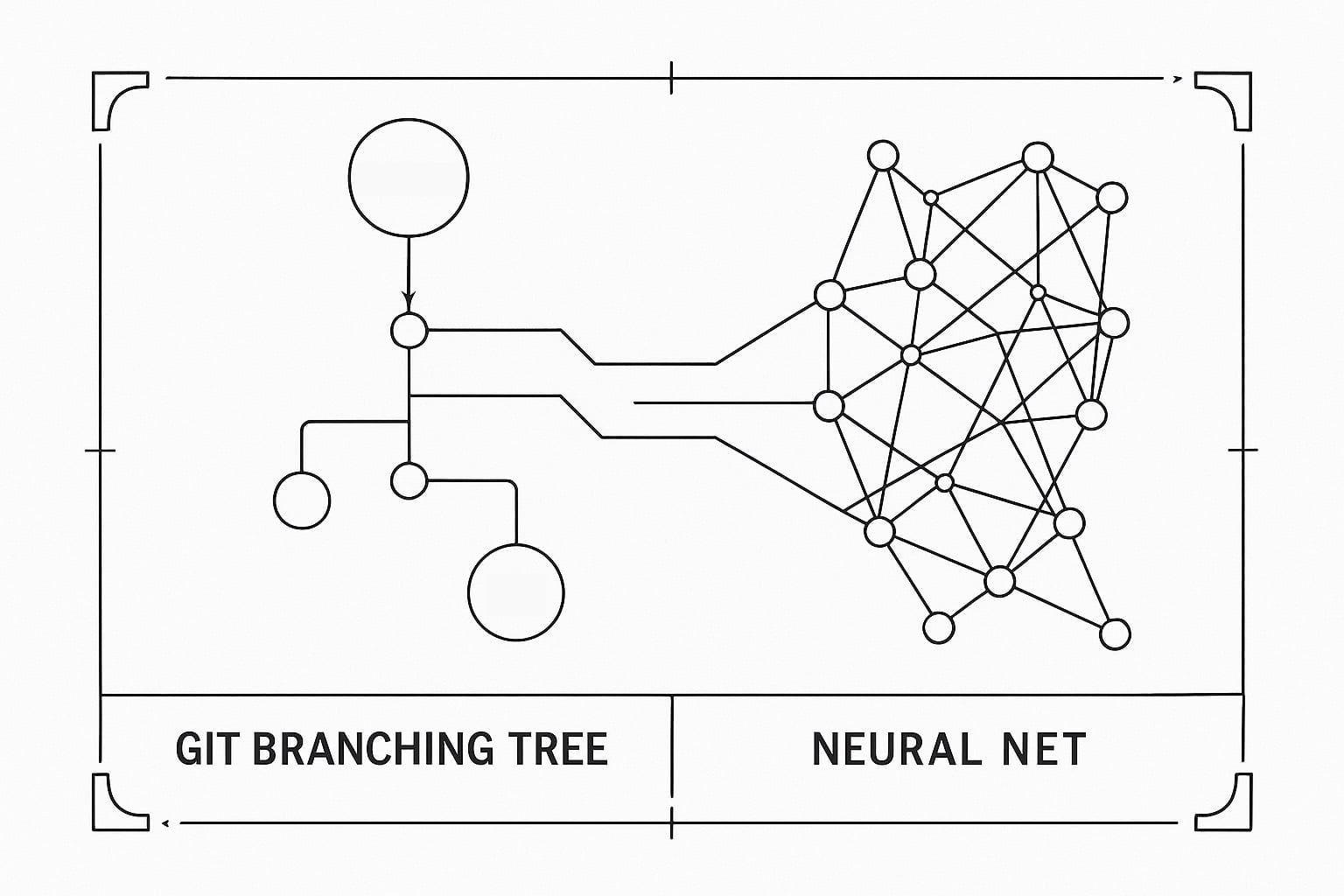

Traditional branching strategies assume branches represent significant effort and merging represents risk. AI changes both assumptions. Creating a branch in Git was always cheap; what now approaches zero is the cost of filling one with a working implementation. The risk of experimentation becomes negligible.

When experimentation grows cheap, the number of viable approaches to any problem increases dramatically. You want a data processing pipeline? Create a branch for streaming, another for batch, a hybrid, maybe a serverless variant. Each fully implemented and tested in an hour or two. Instead of choosing upfront, try them all.
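Spinning up one branch per hypothesis is mechanical enough to script. This minimal sketch assumes a hypothetical `experiment/<problem>-<approach>` naming scheme and a `main` base branch. It prints the `git branch` commands by default and only runs them when `dry_run` is turned off.

```python
import subprocess

def hypothesis_branches(problem, approaches, base="main"):
    """One `git branch <name> <base>` command per approach (naming scheme is hypothetical)."""
    return [["git", "branch", f"experiment/{problem}-{a}", base] for a in approaches]

def create_branches(commands, dry_run=True):
    """Print each command, or execute it when dry_run is False."""
    for cmd in commands:
        if dry_run:
            print(" ".join(cmd))
        else:
            subprocess.run(cmd, check=True)  # raises CalledProcessError on failure

plan = hypothesis_branches("pipeline", ["streaming", "batch", "hybrid", "serverless"])
create_branches(plan)
```

Keeping every experiment in one namespace makes it easy to list, compare, or clean them up later, for example with `git branch --list 'experiment/*'`.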

Branches become hypotheses, not commitments. Implement with AI assistance, evaluate, and keep the learnings, not necessarily the code. Perhaps an experimental passkey implementation revealed UX and compatibility issues: not suitable for the current user base, but promising for later. The branch remains for reference. That is valuable too.

Often the best solution combines insights from several experiments. Cherry-pick the streaming core from one branch, the batch processor from another, integration tests from a third. AI helps integrate the disparate pieces while human judgment selects which to combine. The final implementation comes from synthesis, not from any single exploration.
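The synthesis step can be planned the same way. The sketch below assumes you have already chosen the commits worth keeping; the branch names and abbreviated hashes are hypothetical placeholders. It builds the commands that assemble them on a fresh branch. The `-x` flag on `git cherry-pick` appends a “(cherry picked from commit ...)” line to each message, so the history keeps a pointer back to the experiment each piece came from.

```python
# Hypothetical picks: (source branch, commit hash, what it contributes).
PICKS = [
    ("experiment/pipeline-streaming", "a1b2c3d", "streaming core"),
    ("experiment/pipeline-batch", "d4e5f6a", "batch processor"),
    ("experiment/pipeline-hybrid", "0f9e8d7", "integration tests"),
]

def synthesis_plan(picks, target="synthesis/pipeline", base="main"):
    """Return (note, command) pairs that assemble the chosen commits on a new branch."""
    plan = [("start the synthesis branch", ["git", "switch", "-c", target, base])]
    for branch, commit, what in picks:
        # -x records "(cherry picked from commit ...)" in the new commit message.
        plan.append((f"{what} from {branch}", ["git", "cherry-pick", "-x", commit]))
    return plan

for note, cmd in synthesis_plan(PICKS):
    print(f"{' '.join(cmd):<40} # {note}")
```

The script only records the plan. Conflicts between pieces from different experiments still have to be resolved, which is where AI help and human judgment come in.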

Keep a branch for AI collaboration artifacts. Document prompts that led to breakthroughs, capture architectural decisions and rationale, preserve failed experiments and what they taught you. This branch becomes a knowledge repository. It is where learning lives.
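What goes on that branch is up to the team. As one possible shape, assumed here rather than prescribed, each learning could be stored as a JSON line holding the prompt, the decision, the outcome, and the lessons. The `CollaborationNote` fields are hypothetical. A branch that shares no history with the code, suited to notes like these, can be created with `git switch --orphan`.

```python
import json
from dataclasses import asdict, dataclass, field

@dataclass
class CollaborationNote:
    """One hypothetical entry in an AI-collaboration knowledge branch."""
    topic: str
    prompt: str
    decision: str
    outcome: str                      # e.g. "adopted", "rejected", "deferred"
    lessons: list = field(default_factory=list)

def to_json_line(note):
    """Serialize a note as one JSON line, suitable for appending to a .jsonl file."""
    return json.dumps(asdict(note), sort_keys=True)

def from_json_line(line):
    """Rebuild a note from one JSON line."""
    return CollaborationNote(**json.loads(line))

note = CollaborationNote(
    topic="auth",
    prompt="Add passkey login alongside passwords",
    decision="Keep the branch for reference; do not merge yet",
    outcome="deferred",
    lessons=["UX issues for current users", "Compatibility issues on some clients"],
)
line = to_json_line(note)
print(line)
```

One line per note keeps diffs small, so the knowledge branch's own history stays readable.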

Honest Attribution

Who wrote this code? The question used to have a simple answer. Now it does not.

Code emerges from conversation. Implementations you directed but did not type. Refinements suggested by AI but selected by humans. We need conventions that tell the truth.

When AI generates the initial implementation, say so. When human review catches critical issues, document that. When the solution emerges from multiple rounds of collaboration, the commit message should reflect that reality. Start with the what: the standard description. Follow with the how: the collaboration process, such as “AI provided the initial algorithm design; human adjusted it for performance constraints.” Then the why: the human decisions and context, such as “Chose this approach for better user experience; prioritized readability over micro-optimizations.”

This might seem like a lot of detail for a commit message. But it helps future developers understand not just what changed but how the solution was discovered. It maintains honest attribution in a world where “authorship” is increasingly complex. And it creates a historical record of how this kind of collaboration evolves over time.
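Here is a minimal sketch of the what/how/why structure, assuming `How:` and `Why:` labels as a hypothetical team convention. The `Co-authored-by:` trailer at the end follows the format of the commit at the start of this chapter; the example subject and text are illustrative only. The resulting message could be passed to `git commit -F -`, which reads the message from standard input.

```python
def compose_message(what, how, why, co_authors=()):
    """Build a commit message: subject (what), then how, then why, then trailers."""
    paragraphs = [what.strip(), f"How: {how.strip()}", f"Why: {why.strip()}"]
    trailers = [f"Co-authored-by: {author}" for author in co_authors]
    if trailers:
        # Trailers go in the final paragraph, where Git's trailer tooling looks for them.
        paragraphs.append("\n".join(trailers))
    return "\n\n".join(paragraphs) + "\n"

message = compose_message(
    what="Add fuzzy matching to content search",
    how="AI provided the initial algorithm design; I adjusted it for our performance constraints.",
    why="Chose this approach for better user experience; prioritized readability over micro-optimizations.",
    co_authors=["Claude <noreply@anthropic.com>"],
)
print(message)
```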

CI/CD Inverted

CI/CD pipelines were built for a world where code generation was the bottleneck. That world is gone. AI generates code faster than pipelines can test it.

The traditional pipeline assumes sequential validation. Commit, build, test, deploy. Each stage gates the next. This made sense when commits were precious and generation was slow. When you can generate ten valid implementations in the time it takes to run your test suite once, the pipeline becomes the bottleneck.

Invert the relationship. Test during exploration, not after. AI generates an implementation with embedded tests, runs lightweight validation during generation, estimates its own confidence, and identifies areas needing human review. The pipeline becomes a collaborator, not a gatekeeper.
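A small illustration of testing during exploration: several candidate implementations, standing in for AI-generated alternatives, run against a handful of cheap checks, and only the survivors would move on to the full pipeline. The spec (order-preserving deduplication) and the candidate functions are hypothetical, chosen to keep the sketch self-contained.

```python
# Hypothetical spec: remove duplicates while keeping first-seen order.
CASES = [([], []), ([1, 1, 2], [1, 2]), ([3, 1, 3, 2, 1], [3, 1, 2])]

def fast_checks(candidate):
    """Return the inputs a candidate gets wrong (an empty list means it passed)."""
    return [inp for inp, expected in CASES if candidate(list(inp)) != expected]

def dedupe_sorted(items):
    return sorted(set(items))  # removes duplicates but loses the original order

def dedupe_loop(items):
    seen, out = set(), []
    for x in items:
        if x not in seen:
            seen.add(x)
            out.append(x)
    return out

def dedupe_dict(items):
    return list(dict.fromkeys(items))  # dicts preserve insertion order (Python 3.7+)

candidates = {"sorted": dedupe_sorted, "loop": dedupe_loop, "dict": dedupe_dict}
survivors = sorted(name for name, fn in candidates.items() if not fast_checks(fn))
print(survivors)  # ['dict', 'loop']
```

The cheap checks do not replace the full suite. They decide which candidates are worth its time.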

While one implementation runs through comprehensive tests, AI generates alternatives. While integration tests run, AI explores edge cases. The pipeline and generation process interleave, each informing the other. Tests become specification. Pipelines become feedback loops. Deployment becomes experimentation.
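The interleaving can be sketched with a thread pool from Python's standard library. Both workers are stand-ins, assumptions rather than a real CI or AI API: `generate_alternatives` yields candidates with a short simulated delay, and `full_suite` simulates a slower test run. Each candidate is submitted for testing as soon as it exists, so the suite for one candidate runs while the next is being generated.

```python
import time
from concurrent.futures import ThreadPoolExecutor

def generate_alternatives(n):
    """Stand-in for AI generation: yields one candidate name at a time."""
    for i in range(n):
        time.sleep(0.05)  # simulated generation time
        yield f"candidate-{i}"

def full_suite(candidate):
    """Stand-in for a slow CI run: simulates work, then reports a result."""
    time.sleep(0.1)  # simulated test time
    return candidate, "passed"

def explore_and_test(n):
    with ThreadPoolExecutor(max_workers=4) as pool:
        # The generator is consumed lazily, so each submit happens as soon as a
        # candidate is ready, overlapping testing with further generation.
        futures = [pool.submit(full_suite, c) for c in generate_alternatives(n)]
        return [f.result() for f in futures]

results = explore_and_test(3)
print(results)
```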

The Social Reality

How do teams react when half the commits are co-authored by AI? How do you review code that neither you nor your colleague fully wrote?

Some developers feel threatened when AI-generated code outperforms their handcrafted solutions. Others feel liberated from tedious implementation work. These tensions are real. Code review shifts fundamentally. You are not reviewing syntax. You are reviewing decisions. Why did you accept this suggestion but reject that one? What assumptions might not hold? What edge cases might both human and AI have missed?

Teams need new norms. When is AI generation appropriate? How much involvement requires disclosure? Who owns the bugs in AI-generated code? How do you maintain craftsmanship? These questions do not have universal answers, but every team needs to address them. On trust, the answer is neither blind faith nor constant suspicion. It is thoughtful validation and gradual confidence building.
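Norms around disclosure are easier to discuss with numbers in hand. This sketch counts how many commit messages carry a `Co-authored-by:` trailer for an AI assistant. It assumes the assistant is identified by its email address, here the one from this chapter's example commit, and for simplicity it scans the whole message rather than only the final trailer paragraph. The messages could be collected with `git log --format=%B%x00` and split on the NUL byte; `git interpret-trailers --parse` is another way to extract trailers.

```python
import re

# One trailer per line; the captured value is everything after the colon.
TRAILER = re.compile(r"^Co-authored-by:[ \t]*(.+?)[ \t]*$", re.MULTILINE | re.IGNORECASE)

def co_authors(message):
    """Return the values of all Co-authored-by lines in a commit message."""
    return TRAILER.findall(message)

def ai_share(messages, ai_marker="noreply@anthropic.com"):
    """Fraction of messages with at least one co-author matching ai_marker."""
    if not messages:
        return 0.0
    hits = sum(any(ai_marker in author for author in co_authors(m)) for m in messages)
    return hits / len(messages)

log = [
    "Add pipeline\n\nCo-authored-by: Claude <noreply@anthropic.com>\n",
    "Fix typo in README\n",
    "Refine error handling\n\nCo-authored-by: Sam <sam@example.com>\n",
    "Add graph schema\n\nCo-authored-by: Claude <noreply@anthropic.com>\n",
]
print(ai_share(log))  # 0.5
```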

Patterns That Work Now

The Exploration Sprint: dedicate a two-hour block to AI-assisted exploration. Generate multiple implementations, document learnings, select the best approach or synthesis. This legitimizes experimentation and prevents it from becoming endless.

Human Touch Points: identify where human judgment is essential. Architecture decisions, UX choices, security review, performance trade-offs. AI assists everywhere. Humans make the calls that matter.

Living Documentation: treat docs as continuous, not eventual. AI generates initial documentation with the code, humans refine for clarity and context, updates happen in the same commits as code changes.

Honest History: commit to truthful attribution. Document AI involvement clearly, credit human decisions explicitly, preserve the collaborative nature of what is happening.
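Two of these patterns, human touch points and living documentation, can be backed by a lightweight check before merge. The sketch assumes a hypothetical repository layout (`src/`, `docs/`, `migrations/`) and a hypothetical mapping from path patterns to the human decisions they require; the changed paths might come from `git diff --name-only main...HEAD`. Hosting platforms offer related mechanisms, such as GitHub's `CODEOWNERS` file, which routes review requests by path.

```python
from fnmatch import fnmatch

# Hypothetical mapping from path patterns to the human decision they require.
TOUCH_POINTS = {
    "src/auth/*": "security review",
    "migrations/*": "architecture decision",
    "src/ui/*": "UX review",
    "*.sql": "performance review",
}
CODE_PREFIX = "src/"                  # hypothetical layout
DOCS_PREFIXES = ("docs/", "README")   # str.startswith accepts a tuple

def required_reviews(changed_paths):
    """Human reviews a change needs, based on the paths it touches."""
    return {
        review
        for path in changed_paths
        for pattern, review in TOUCH_POINTS.items()
        if fnmatch(path, pattern)
    }

def docs_kept_in_step(changed_paths):
    """True unless the change edits code without touching any documentation."""
    touches_code = any(p.startswith(CODE_PREFIX) for p in changed_paths)
    touches_docs = any(p.startswith(DOCS_PREFIXES) for p in changed_paths)
    return touches_docs or not touches_code

changed = ["src/auth/session.py", "migrations/0007_sessions.sql"]
print(sorted(required_reviews(changed)))
print(docs_kept_in_step(changed))  # False: code changed, docs did not
```

Note that `fnmatch` is not path-aware: `*` also matches `/`, which is why `*.sql` catches files in any directory.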

Where This Goes

Git was revolutionary because it made distributed version control mainstream. Every developer has the full history. Every clone is complete. This democratized development in ways we are still discovering.

AI collaboration might be similarly revolutionary. Every developer has an intelligent partner. Every problem has multiple solutions. Every experiment is affordable.

If everyone can generate code, what distinguishes developers? If AI can implement anything, what is worth implementing? If experimentation is free, how do you decide when to stop exploring?

These are not problems to solve. They are tensions to navigate. The future of version control is about managing the collaboration between human creativity and machine capability. Preserving the story of how software comes to be, in an age where that story is increasingly complex.

Sources and Further Reading

The chapter’s opening reference to Rich Hickey’s “Simple Made Easy” (Strange Loop, 2011) about managing complexity is particularly relevant to version control in AI development. His insight that we can only consider a limited number of things at once drives the need for clear Git workflows.

The historical perspective draws on Alan Perlis’s epigrams on programming (1982) and insights from the NATO Software Engineering Conference (1968), where version control challenges were first formally discussed at scale.

For those interested in the evolution of version control, the story of Git’s creation by Linus Torvalds (2005) provides context for understanding how distributed version control revolutionized software development, setting the stage for today’s AI collaboration patterns.

The ethical considerations around attribution echo themes from Weizenbaum’s “Computer Power and Human Reason” (1976), particularly his concerns about transparency in human-machine collaboration.


© 2025 Joshua Ayson. All rights reserved. Published by Organic Arts LLC.

This chapter is part of AgentSpek: A Beginner’s Companion to the AI Frontier. All content is protected by copyright. Unauthorized reproduction or distribution is prohibited.

Chapter 3 of 18 in AgentSpek: A Beginner’s Companion to the AI Frontier.