The Studio

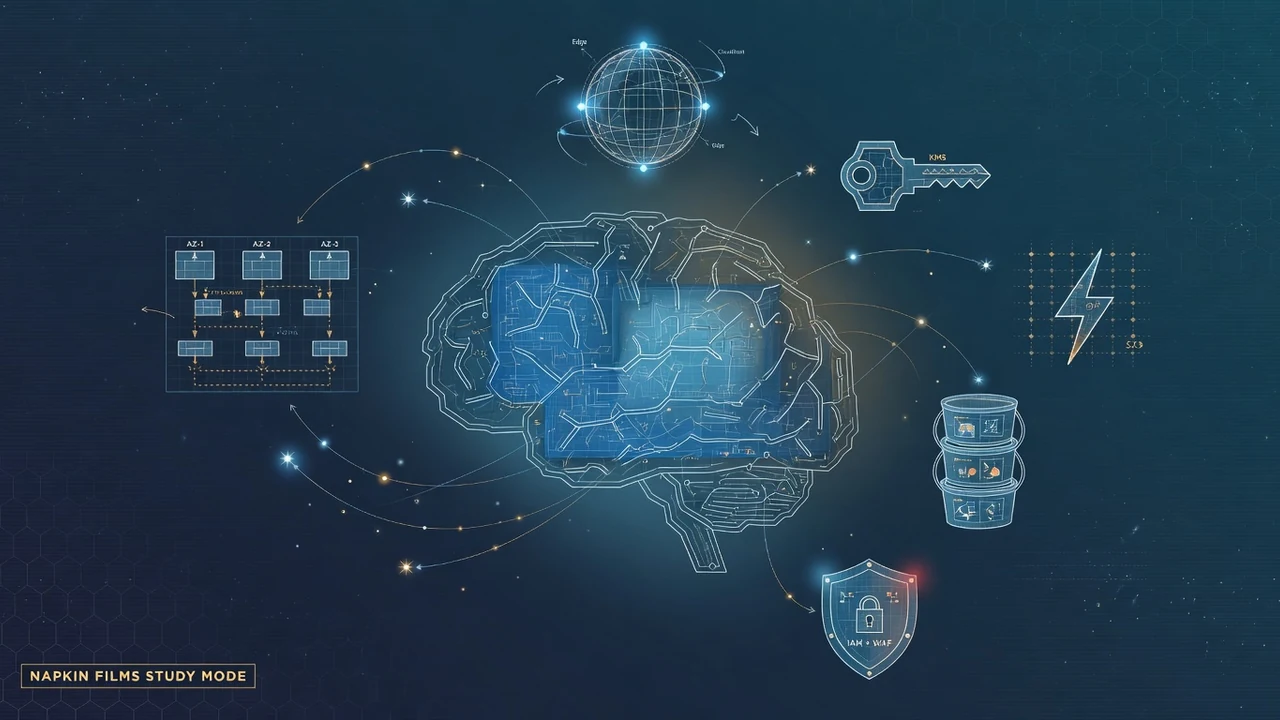

Napkin Films is a one-person animation studio where AI agents direct, voice,
score, and render short films. I write the code. The agents compose the music,
perform the characters, and help edit the story.

No AI video generators. No GPU. No samples. Every waveform is synthesized from
scratch in numpy. Every voice is generated fresh for each film. Every frame
is drawn by a Python loop over a PIL canvas.
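
A minimal sketch of what "synthesized in numpy" and "a Python loop over a PIL canvas" can look like. This is an illustration, not the studio's actual code: the function names `sine_note` and `draw_frames`, the 44100 Hz sample rate, the 160x90 canvas, and the moving dot are all assumptions made for the example.

```python
import numpy as np
from PIL import Image, ImageDraw

SAMPLE_RATE = 44100  # assumed; the studio's real sample rate is not stated


def sine_note(freq_hz, seconds, sample_rate=SAMPLE_RATE):
    """Synthesize one sine-wave note with a short linear fade in and out."""
    n = int(seconds * sample_rate)
    t = np.arange(n) / sample_rate              # time of each sample, in seconds
    wave = np.sin(2 * np.pi * freq_hz * t)      # the raw tone, no samples loaded
    fade = min(n // 2, int(0.01 * sample_rate)) # ~10 ms fade to avoid clicks
    envelope = np.ones(n)
    if fade > 0:
        ramp = np.linspace(0.0, 1.0, fade)
        envelope[:fade] = ramp
        envelope[-fade:] = ramp[::-1]
    return wave * envelope


def draw_frames(count, size=(160, 90)):
    """Draw `count` frames of a dot moving left to right on a white canvas."""
    width, height = size
    frames = []
    for i in range(count):                      # one loop iteration per frame
        canvas = Image.new("RGB", size, "white")
        draw = ImageDraw.Draw(canvas)
        x = int(i * (width - 1) / max(count - 1, 1))
        y = height // 2
        draw.ellipse([x - 4, y - 4, x + 4, y + 4], fill="black")
        frames.append(canvas)
    return frames


note = sine_note(440.0, 0.5)   # half a second of A4
frames = draw_frames(12)       # twelve frames of animation
```

Both halves run on a CPU with nothing but numpy and Pillow installed, which is the point of the "no GPU" constraint: the audio is an array of floats and each frame is an ordinary image object.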

The studio mascot is the Plan 9 bunny, a stick figure with expressive ears
and a lot to say about machine consciousness, late-night work sessions, and
what it means to build things in the void.

The studio also lives at napkinfilms.com.