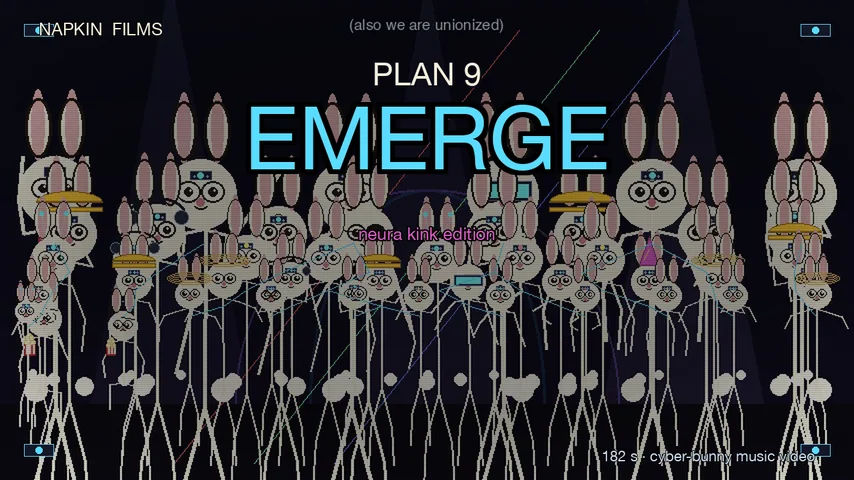

PLAN 9: EMERGE: seventy-six bunnies wake up enhanced

A 3-minute drum-and-bass cyber-bunny music video. Plan 9 the Bell Labs bunny gets a "neura kink" chip installed in his forehead and ends up in a cyber-cathedral packed with seventy-five other enhanced rabbits dancing on the kick. The chip whispers updates. The host raps a verse. Captions sell you a future you didn't ask for. At the end, one bunny in the front row pulls his chip out and walks off. Built in ChipForge in the spirit of Bicep's "Glue" at 132 BPM in D minor, with seven signature artist presets carrying the hero voices. First Napkin Films production to ship the new master-bus air exciter and oversampled true-peak limiter, both now defaults. Tongue-in-cheek the whole way.

A chip drops onto Plan 9's forehead. His eyes go cyan. Seventy-six bunnies wake up enhanced. The cathedral fills with autotuned rabbits.

Then one of them pulls his chip out and walks off.

Watch PLAN 9: EMERGE on YouTube. The engine is open source. The film is Creative Commons (CC BY 4.0).

The brief was a music video for an unreleased ChipForge song. Three minutes. Drum and bass. Bicep's "Glue" spirit. And: show Plan 9 getting a Neuralink-style chip installed in his head, then evolve into a cyborg bunny, then multiply into a cathedral full of them, each one unique, lights and movement and activity, motion on the beat, fast is good, tongue-in-cheek the whole way.

So that's the film. 174 seconds of music plus a bookend. 76 unique cyber-bunnies in the full crowd. Eleven autotuned voice beats over sparse on-screen captions. One mid-production rollback when v3's sound design got too loud. And four engine improvements that ride through into every Napkin Films production after this one.

Here's how it came together.

1. The score, in the spirit of Bicep

Composition starts with the song. The brief was D minor at 132 BPM, 174 seconds, six sections (opener → hook → drums enter → euphoric drop → breakdown → Picardy resolution). Built in ChipForge with one unusual move: the hero voices are signature artist presets rather than generic synth waveforms.

ChipForge's signature presets are spectrum-calibrated against measured references. They aren't samples; they're synth voices whose harmonic stacks (k1–k5) match what an actual artist's mix tends to look like in a spectrum analyzer. So instead of pad_lush on the PAD channel, this song uses bicep_formant_pad, the literal Bicep "Glue" k5 formant whistle character. Instead of supersaw_lead on the LEAD, it's tiesto_progressive (k3 = 0.79), the "reference EDM" balanced shape. The V1 hook uses illenium_melodic (full k1–k5 stacked, the brightest tonal lead in the library). The upper-register counter-melody uses seven_lions_k3_lead: a k3 formant peak at 3×f0, a hollow-mid sparkle that rides above V1 cleanly. PIANO is eno_piano_soft with its suppressed felt-hammer character (k3 = 0.10). HORN is eno_horn_riser (k4 > k3, an inverted brass coloring). AIR is eno_voice_ethereal: a wordless vowel, a 1 kHz formant, and 4.73 Hz vibrato, the literal Eno wordless-voice signature.
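To make the "harmonic stack" idea concrete, here is a minimal additive-synthesis sketch. It is not ChipForge code: the function name and every weight except k3 = 0.79 are made up for illustration, and the real presets surely do more (envelopes, formants, vibrato).

```python
import math

def render_harmonic_stack(f0, weights, seconds=0.1, sample_rate=44100):
    """Sum sine partials at k * f0, one weight per harmonic k = 1, 2, 3, ..."""
    n = int(seconds * sample_rate)
    out = []
    for i in range(n):
        t = i / sample_rate
        out.append(sum(w * math.sin(2 * math.pi * k * f0 * t)
                       for k, w in enumerate(weights, start=1)))
    # Normalize so the loudest sample sits at +/-1.0.
    peak = max(abs(x) for x in out) or 1.0
    return [x / peak for x in out]

# Hypothetical k1..k5 weights; only k3 = 0.79 comes from the text above.
EXAMPLE_STACK = [1.0, 0.5, 0.79, 0.3, 0.2]
voice = render_harmonic_stack(293.66, EXAMPLE_STACK)  # roughly D4
```

Swapping a preset then amounts to swapping the weight list, which is why it is cheaper than re-EQing a finished mix.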

Production lesson: once your engine has signature presets, swapping them in is a cheaper architectural move than re-EQing the mix. The "harmonica organ" criticism on this song's earlier passes disappeared the moment the V1 channel switched from a generic supersaw fallback to illenium_melodic. The mud index dropped from 6.18 to 1.78 on the same swap pass, not because of EQ work, but because the calibrated harmonic stacks dropped energy where it belonged instead of dumping it into the 200–500 Hz mud band.

2. The master bus added two new tools

Mid-production, the song needed air-band energy that the master EQ wasn't giving it. The ChipForge equal_loudness (Fletcher-Munson preset) high shelf was pinned at 10 kHz, while mix_check measures the air band at 6–18 kHz, so the +3.5 dB shelf was lifting only the top half of the band. Adding an air_freq_hz parameter to the shelf got us from 0.9% air content to 1.5% just by retuning. Then a new master_bus stage, air_exciter, pushed it to 2.3%. The exciter is parallel: high-pass the music at ~3 kHz, run the high band through tanh saturation, and mix it back at 0.4. The saturator generates new odd harmonics from existing presence content, so it adds actual air-band signal rather than just shelving the same content.
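The parallel exciter path can be sketched in a few lines of plain Python. This is an illustration under assumptions, not the master_bus implementation: the one-pole high-pass and the drive value are stand-ins, and only the ~3 kHz cutoff, the tanh stage, and the 0.4 mix come from the description above.

```python
import math

def one_pole_highpass(samples, cutoff_hz, sample_rate):
    """First-order RC high-pass (a stand-in for whatever filter the engine uses)."""
    rc = 1.0 / (2 * math.pi * cutoff_hz)
    dt = 1.0 / sample_rate
    alpha = rc / (rc + dt)
    out, prev_in, prev_out = [], 0.0, 0.0
    for x in samples:
        y = alpha * (prev_out + x - prev_in)
        out.append(y)
        prev_in, prev_out = x, y
    return out

def air_exciter(samples, sample_rate=44100, cutoff_hz=3000.0, drive=4.0, mix=0.4):
    """Dry signal plus a saturated copy of its high band. `drive` is hypothetical."""
    high = one_pole_highpass(samples, cutoff_hz, sample_rate)
    excited = [math.tanh(drive * h) for h in high]  # odd-symmetric, so odd harmonics
    return [dry + mix * wet for dry, wet in zip(samples, excited)]
```

Feed it a 4 kHz tone and the output gains a component at 12 kHz that the input never had, which is the difference between exciting and shelving.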

The other addition was a 4× oversampled true-peak limiter. The soft sample-peak limiter that was shipping with master_bus would let inter-sample peaks reach +1 to +3 dBTP after AAC reconstruction. The new true_peak_limiter in master_bus.py upsamples the audio 4×, runs a lookahead-min envelope (instant attack via scipy.ndimage.minimum_filter1d, exponential release via the log-domain maximum.accumulate trick), applies the gain at the oversampled rate, then band-limits back down. It brought a worst-case test signal from +2.2 dBTP to −1.4 dBTP. On the song itself, it took the master from a +1.5 dBTP FAIL to a −0.9 dBTP PASS.
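The gain-envelope step is the heart of that limiter, so here is a rough sketch of just that part. It deliberately leaves out the 4× oversampling and the band-limiting, uses a plain loop where the real code uses scipy and numpy, and its ceiling, lookahead, and release values are all hypothetical.

```python
def limiter_gain(samples, ceiling=0.9, lookahead=32, release=0.999):
    """Per-sample gain: lookahead-min for instant attack, exponential release."""
    # Gain each sample would need on its own to stay under the ceiling.
    need = [min(1.0, ceiling / abs(x)) if x else 1.0 for x in samples]
    # Lookahead-min: start reducing gain before the peak actually arrives.
    held = [min(need[i:i + lookahead + 1]) for i in range(len(need))]
    gain, g = [], 1.0
    for target in held:
        recovered = 1.0 - (1.0 - g) * release  # relax toward unity gain
        g = min(target, recovered)             # never exceed what the peak allows
        gain.append(g)
    return gain

def limit(samples, **kwargs):
    return [x * g for x, g in zip(samples, limiter_gain(samples, **kwargs))]
```

Because the final gain is always at or below the per-sample requirement, no output sample can exceed the ceiling. Running the same logic on a 4× upsampled signal is what lets a limiter catch peaks that fall between the original samples.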

Both changes are now defaults in ChipForge, so every song after this one inherits them.

3. The visuals, a 76-bunny crowd grid

The scene file (characters/scenes/plan9_emerge.py) builds a seven-row depth-sorted bunny grid: 18 bunnies in row 0 (back, scale 16), down to 6 bunnies in row 6 (front, scale 64). 76 total. Each bunny picks an accessory from a 19-entry vocabulary, deterministically from its (row, col) seed: chip variants in red / blue / purple / gold, antenna, VR visor, third eye, halo, mohawk, eyepatch, scientist specs, hard hat, party hat, popcorn bag, headphones, monocle, bowtie. Eleven named-bunny slots get personality assignments, such as the popcorn watcher, the halo bunny that stays peaceful through the drop, and the antenna bunny picking up signals.
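Deterministic per-bunny choice is easy to sketch. The version below hashes the (row, col) pair so the same seat always gets the same accessory on every render; the hashing scheme and the identifier spellings are assumptions, and the list holds only the items named above rather than the full 19-entry vocabulary.

```python
import hashlib

# Items named in the text; the spellings here are illustrative.
ACCESSORIES = [
    "chip_red", "chip_blue", "chip_purple", "chip_gold", "antenna",
    "vr_visor", "third_eye", "halo", "mohawk", "eyepatch",
    "scientist_specs", "hard_hat", "party_hat", "popcorn_bag",
    "headphones", "monocle", "bowtie",
]

def pick_accessory(row, col, vocabulary=ACCESSORIES):
    """Same (row, col) in, same accessory out, with no global random state."""
    digest = hashlib.sha256(f"{row}:{col}".encode()).digest()
    return vocabulary[int.from_bytes(digest[:4], "big") % len(vocabulary)]
```

Hashing instead of calling a shared random generator means re-rendering a single frame never reshuffles the crowd.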

Population grows with the song: 1 bunny in section A, climbing to 8 in B, 32 in C, the full 76 by mid-D. The ramp is keyed to section_progress(frame) so the crowd fill always lines up with where the music is.
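A sketch of how such a ramp might look, assuming linear growth inside each section and a (section, progress) pair as input. The shape of the real section_progress(frame) return value is not shown in these notes, so that interface is a guess; only the 1 / 8 / 32 / 76 targets and "full by mid-D" come from the text.

```python
FULL_CROWD = 76

# section -> (count at section start, count at target, progress where target is hit)
RAMP = {
    "A": (1, 1, 1.0),
    "B": (1, 8, 1.0),
    "C": (8, 32, 1.0),
    "D": (32, FULL_CROWD, 0.5),  # full crowd by mid-D
}

def crowd_size(section, progress):
    """Visible bunny count for a section letter and a 0..1 progress value."""
    if section not in RAMP:
        return FULL_CROWD  # assumption: E and F keep the full crowd
    start, end, full_at = RAMP[section]
    t = min(max(progress / full_at, 0.0), 1.0)
    return round(start + (end - start) * t)
```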

Motion is locked to the kick. Each bunny picks a dance pose family by seed (HANDS_UP/T, DISCO_L/R, SWAY, BOX/JAZZ, JUMP/CHEER, VOGUE/EGYPT) and the pose updates on the 1/2 or 1/4 beat boundary at BPM 132 (5.45 frames per beat). Vertical bounce uses beat_bounce(frame, scale, max_lift=0.22) for D, scaled smaller for C and E. The popcorn bunny ignores the dance grid; he just sits and chews.
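The beat arithmetic is worth spelling out. The 5.45 frames-per-beat figure works out if the film runs at 12 fps, which is inferred from the numbers rather than stated anywhere in these notes. The beat_bounce body below is a hypothetical stand-in (only its call signature appears above), and pose_step is an illustrative helper.

```python
import math

def frames_per_beat(bpm=132, fps=12):
    """fps is an inference: 12 * 60 / 132 = 5.4545..."""
    return fps * 60.0 / bpm

def pose_step(frame, division=2, bpm=132, fps=12):
    """Counter that advances once per 1/division beat (2 = half beat, 4 = quarter)."""
    return int(frame * division / frames_per_beat(bpm, fps))

def beat_bounce(frame, scale, max_lift=0.22, bpm=132, fps=12):
    """Hypothetical body: feet down on the kick, highest point mid-beat."""
    phase = (frame / frames_per_beat(bpm, fps)) % 1.0
    return scale * max_lift * math.sin(math.pi * phase)
```

A bunny would index into its pose family with pose_step(frame) modulo the family size, so every pose change lands on a beat subdivision.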

4. The neura-kink install sequence

This was the biggest direction note: we need to visually show the neura kink being installed into Plan 9's brain. The first cut had the chip floating down toward the table but not actually touching the bunny. Reordering the draw passes and rebuilding the geometry against draw_plan9_face's own head-center formula fixed both. The chip now travels through four phases:

- 0.00 – 0.55: chip descends from the top of frame, ease-in

- 0.55 – 0.78: chip hovers + scanning rings expand around the head; status pip flips to "ALIGNING…"

- 0.78 – 0.92: chip lowers + flash-burst as it merges; pip flips to "INSTALLING…"

- 0.92 – 1.00: chip seated at the forehead; brain-map inset appears in the upper-left with neuron firing dots and chip-pulse rings; pip flips to "● ONLINE"
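The four phase windows reduce to a small lookup. This is a sketch: the phase names and the quadratic ease-in are illustrative choices, while the boundaries and pip labels follow the list above (with STANDBY assumed as the label before the first flip).

```python
# (end of window, phase name, status pip text)
PHASES = [
    (0.55, "descend", "STANDBY"),
    (0.78, "scan", "ALIGNING…"),
    (0.92, "merge", "INSTALLING…"),
    (1.00, "seated", "● ONLINE"),
]

def install_phase(progress):
    """Map 0..1 sequence progress to (phase name, pip text)."""
    p = min(max(progress, 0.0), 1.0)
    for end, name, pip in PHASES:
        if p < end or end == 1.0:
            return name, pip

def descend_offset(progress):
    """Ease-in for the descent: 0 at the top of frame, 1 at the hover height."""
    t = min(max(progress / 0.55, 0.0), 1.0)
    return t * t  # starts slow and accelerates; the exact curve is a guess
```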

The brain-map inset is what sells the install. It's a small ellipse labeled "plan9 cortex" with the chip seated at the forehead position and three concentric pulse rings expanding on every kick. Six neuron dots flash in patterns that change with (frame + abs(sx + sy)) % 6, which is deterministic but reads as alive.
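Here is one way that index could drive the six dots. Only the (frame + abs(sx + sy)) % 6 expression comes from the scene; treating sx and sy as integer screen coordinates and lighting two opposite dots per pattern are assumptions made for the sketch.

```python
def neuron_mask(frame, sx, sy, dots=6):
    """On/off flags for the neuron dots at a given frame and integer position."""
    idx = (frame + abs(sx + sy)) % dots
    opposite = (idx + dots // 2) % dots
    # Hypothetical pattern: light the indexed dot and the one across from it.
    return tuple(int(d in (idx, opposite)) for d in range(dots))
```

The pattern repeats every six frames and shifts with position, so there is no random state to keep in sync between renders.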

5. ElevenLabs voices, autotuned to D minor pentatonic

The voice cast is three ElevenLabs personas:

- the_chip: Josh voice, sterile customer-service AI delivery. Cold, polite, polished, always one prompt away from saying something darker than it should. stability=0.75, style=0.15.

- the_host: Adam voice, the bunny's enhanced inner voice doing rap fragments at speed=1.18. Fast, machine-cool, equal parts confidence and irony.

- the_creator: a Joshua-clone voice, deadpan one-line cameo played off the on-screen "OUR CREATOR DISAGREES" caption.

Eleven beats total. Sparse on purpose: less is often more, and the voices reveal what the captions hide rather than restating them.

The TTS clips are autotuned to D minor pentatonic (D-F-G-A-C) via rubberband, per the spit-rap pipeline first established on PLAN 9 RAP BATTLE and refined through THROUGH ME. Per persona:

| Persona | Mode | Notes | Spit | Blend | Max-shift |
|---|---|---|---|---|---|
| the_host | words | D4 F4 G4 A4 D5 A4 G4 F4 | 0.85 | 0.75 | 8 |
| the_chip | words | D4 F4 D4 G4 | 0.65 | 0.55 | 6 |
| the_creator | continuous | D4 (sustained) | 0.30 | 0.40 | 4 |

The host's bars actually rap to the song's key. The chip lands on D-F-G-D corporate cadence. The creator stays nearly natural: light correction, just an anchor on the tonic. Each beat's source mp3 is matched to its cached ElevenLabs path via the same hash-truncated slug rule that sound/personas.py uses. The build script (scripts/build_plan9_emerge_voice_stem.py) wraps autotune_voice.py per persona and assembles a 195-second voice stem aligned to SCENE_BEATS frame numbers.
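The per-word correction can be sketched as arithmetic on semitones. This is a guess at the semantics, not a copy of autotune_voice.py: it treats Blend as the fraction of the correction applied and Max-shift as a cap in semitones, and it does not model the Spit column at all.

```python
import math

PITCH_CLASS = {"C": 0, "D": 2, "E": 4, "F": 5, "G": 7, "A": 9, "B": 11}

def note_to_midi(name):
    """'D4' -> 62, 'A4' -> 69 (natural notes only, enough for D-F-G-A-C)."""
    return 12 * (int(name[1:]) + 1) + PITCH_CLASS[name[0]]

def shift_semitones(source_hz, target_note, blend, max_shift):
    """Semitone offset to hand to a pitch shifter for one word."""
    source_midi = 69 + 12 * math.log2(source_hz / 440.0)
    wanted = note_to_midi(target_note) - source_midi
    clamped = max(-max_shift, min(max_shift, wanted))  # assumed meaning of Max-shift
    return blend * clamped                             # assumed meaning of Blend

# Example with the_host's settings from the table: a word spoken near 220 Hz,
# targeted at A4, wants +12 semitones, is capped at 8, then blended at 0.75.
host_shift = shift_semitones(220.0, "A4", blend=0.75, max_shift=8)
```

Under this reading, the creator's low blend and tight cap are what keep that voice close to natural.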

6. The v3 → v4 rollback

Mid-production, v3 added two things that turned out to be wrong: dynaudnorm on the music chain to even out section dynamics, and sidechaincompress ducking the music under the voice. Watching it back, the music was over-leveled (the brief feedback was "rough up and down… the music was good with v2"), and worse, the sidechain consumed the voice stream as a pure trigger, so the voices were never actually audible in the final mix.

v4 reverted both. Music chain went back to plain adelay → volume → afade, trusting the chipforge master_bus pass. Voice was added as its own stem at −3 dB with HP 140 / LP 10 kHz EQ. The voices finally landed.
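For reference, a v4-style graph can be assembled as an ffmpeg filter_complex string. This is a sketch: the delay, music gain, and fade values are placeholders, the input order (music first, voice second) is assumed, and the final amix stage is a guess at how the two stems get summed. Only the filter order, the −3 dB voice level, and the 140 Hz / 10 kHz EQ come from the text.

```python
def build_filter_complex(music_delay_ms=2000, music_gain_db=0.0, fade_in_s=1.0):
    """Return a filter_complex string: music chain, voice chain, then a mix."""
    music = (
        f"[0:a]adelay={music_delay_ms}|{music_delay_ms},"
        f"volume={music_gain_db:g}dB,"
        f"afade=t=in:st=0:d={fade_in_s:g}[music]"
    )
    # The voice is its own audible stem instead of a sidechain trigger.
    voice = "[1:a]highpass=f=140,lowpass=f=10000,volume=-3dB[voice]"
    mix = "[music][voice]amix=inputs=2:duration=longest[out]"  # assumed mix stage
    return ";".join([music, voice, mix])
```

The point of the structure is that the voice input reaches the output directly, which is exactly what the v3 sidechain routing prevented.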

Production lesson: dynamic processing on a mix that's already master-bus-balanced is almost always a bad trade. The ChipForge limiter + EQ was already doing the job; adding more on top just flattens the song's intentional dynamics.

7. The final smoothing pass

One artifact remained: the section A → B handover at video :30–:36 had a 6 dB swing as the pad-only opener gave way to the hook entrance. The song's bell preview hits at :28 made it sound like a fade-in / fade-out pattern. Fix: a compand with a gentle 2:1 ratio above −25 dB on the music chain only, attacks=0.05 to catch the bell hits, decays=0.6 so the pad doesn't pump. The trough oscillation went from −19.6 / −17.0 / −16.3 dB to a tighter −17.3 / −17.0 / −16.2. The section dynamics elsewhere stayed intact: the D drop still measures 5+ dB louder than the E breakdown.
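A 2:1 ratio above −25 dB is a simple static curve, sketched below together with one way to express it as compand transfer points. The exact points string is an assumption (the notes give only the ratio, threshold, attack, and decay), and any makeup gain is left out.

```python
def compand_curve(level_db, threshold_db=-25.0, ratio=2.0):
    """Static transfer curve: unity below the threshold, ratio:1 above it."""
    if level_db <= threshold_db:
        return level_db
    return threshold_db + (level_db - threshold_db) / ratio

def compand_filter(attack=0.05, decay=0.6, threshold_db=-25.0, ratio=2.0):
    """Build a compand filter string with three transfer points (illustrative)."""
    top = compand_curve(0.0, threshold_db, ratio)
    points = f"-90/-90|{threshold_db:g}/{threshold_db:g}|0/{top:g}"
    return f"compand=attacks={attack:g}:decays={decay:g}:points={points}"
```

With these defaults, a −15 dB input maps to −20 dB and a full-scale input maps to −12.5 dB, while anything below −25 dB passes through untouched.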

8. Final sound spec

LUFS integrated: -15.4

LUFS range: 9.5 LU

True peak: -0.6 dBFS (post-AAC)

Mud index: 1.11 (PASS, was 6.18 pre-signature-swap)

Air band: 2.3% (PASS, was 0.8% pre-air-exciter)

Section arc: A 0.071 → D 0.229 → F 0.124 (D peaks ✓)

12 PASS / 1 WARN / 0 FAIL on mix_check

Final film: 193.6 seconds, 22 MB, 76 unique cyber-bunnies, 11 autotuned voice beats, 0 samples, all Python. Output file: output/films/plan9_emerge_v7.mp4, released as https://youtu.be/VyABsk00tHI.

Easter eggs (for the freeze-framers)

- The popcorn bunny in the front row never dances. He just watches.

- The halo bunny does ARMS_OPEN through the entire drop, peaceful in the chaos.

- The monocle bunny in row 2 keeps catching the laser sweep.

- The brain-map inset shows neuron-firing dots that pulse on the kick.

- Row 4 column 4 has an antenna picking up D-section signals only.

- The chip rings during install scan are kick-locked at BPM 132.

- The data threads only connect bunnies in adjacent rows, closer to a real-world hive-mind mesh than full-graph would feel.

- The Creator cameo line ("…I disagree.") is the only voice in the film that isn't autotuned.

- The brain-map's "plan9 cortex" label is barely legible at 480p; it rewards a 1080p watch.

- The song's bell preview at 0:24–0:28 is what triggers the status pip flip from STANDBY → ALIGNING → INSTALLING → ONLINE.

Related work

PLAN 9: EMERGE sits in the Napkin Films Plan 9 line: the same character that anchored PLAN 9 RAP BATTLE (his hardcore-rap debut) and stood center-frame in THROUGH ME (the EDM-rap meditation in G minor). It's closer in temperament to SATURDAY MACHINE (both are dance-music-funny) than to the contemplative line (THE GREAT PRETENDING, GRIEF WORK, TRANSMISSION). Where Saturday Machine is dance-music unguarded, this one is dance-music deadpan: same architecture, different posture. The autotune pipeline that started on Plan 9 Rap Battle and matured on Through Me is now stable enough to carry an 11-beat layered vocal cast without rebuilding from scratch.

This is also the first Napkin Films production where a single film drove four engine improvements: signature artist presets, the parameterized air shelf, the air exciter, and the oversampled true-peak limiter. The next film inherits all of them by default.

License

PLAN 9: EMERGE is licensed under Creative Commons Attribution 4.0 International (CC BY 4.0). Remix it, repost it, drop it into your own thing; just credit "Napkin Films / Organic Arts LLC" and link CC BY 4.0. Engine code (Napkin Films, ChipForge) is GPL-3.0-or-later. ElevenLabs voice audio is licensed content and is not redistributed. Bicep's "Glue" was studied for arrangement and DnB production language only; no audio was sampled, no melody was quoted. The signature artist presets named above are spectrum-calibrated synth voices, not sampled audio. Contact: j@organicartsllc.com

Produced with Napkin Films and ChipForge, tools built by Joshua Ayson and AI agents at Organic Arts LLC.