Four Films From Code: Stick Figures, Chiptunes, and the AI Director's Chair

Four animated short films, written entirely in Python, scored with numpy-synthesized chiptunes, voiced with cloned speech, and directed by AI agents. No animation software. No audio libraries. Just code, constraint, and a stubborn belief that stick figures can make you feel something.

I just uploaded four animated short films to YouTube. Every frame was generated from Python. Every note of music was synthesized from numpy arrays. Every voice was cloned from my own speech using ElevenLabs. The "animation software" is Pillow drawing stick figures onto 854x480 canvases at 12 frames per second. The "recording studio" is a chiptune engine I built from scratch that does not use a single audio sample.

No After Effects. No GarageBand. No stock footage. No templates.

Just code.

The Four Films

The Boy Who Mapped the Maze

A fable. A boy falls from the stars. The universe, his mother, lets him go. She never tells him why. He receives four gifts: a lantern that burns with one question, a mirror that shows two truths, broken stones he builds things from, and a chain of love too heavy to carry but too precious to set down. He wanders a maze. He finds a red thread. He does not try to escape. He maps every turn.

"There is no ending. Only the walk back to where you began."

This is the most personal of the four. If you watch one, watch this one.

Living in the Prompt

"Twelve projects. Seven repos. Every minute of every day, all threads firing."

An essay film about what happens when AI agent mode stops being a tool and becomes a medium. From single-threaded human to prompt-native cognitive hybrid. Six acts tracing the acceleration, the forge, the shift, and the integration back into the people you love.

I narrated it in my own voice. The stick figure on screen is me, typing, building, transforming, then sitting with my family under the stars.

The Last Degree: Cognitive Twin

On Earth, you are never more than six degrees from what keeps you alive. Food, power, water. There is always a buffer. You can improvise. Fall back. Survive locally.

In space, that buffer disappears. Zero degrees of separation from the machine. If the air system fails, you die in four minutes. There is no plan B.

This film asks: what does AI need to become when human survival depends on it? Not just smart. It has to know you. How you think. How you decide. How you recover. Not you in the machine. You, extended into the machine. A cognitive twin.

Plan 9 in the Upside Down

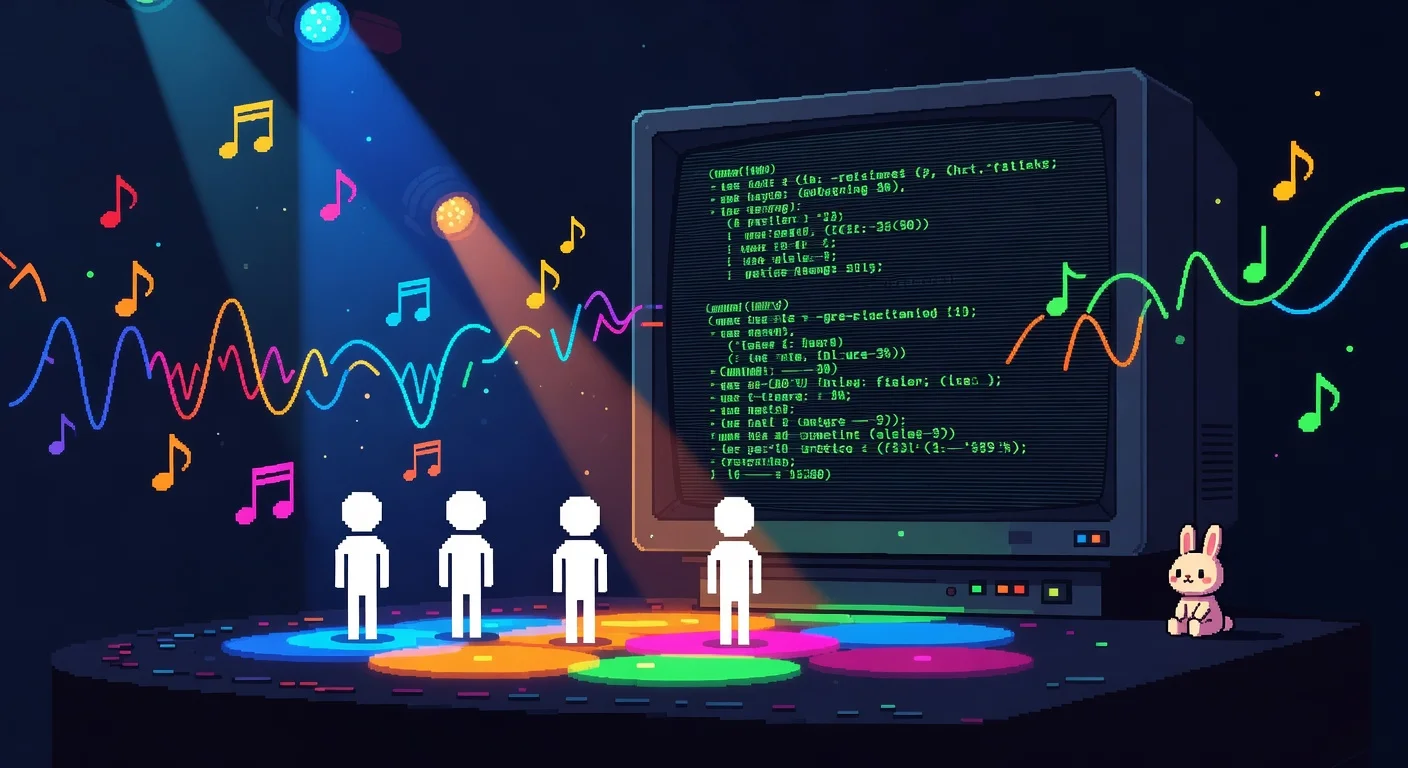

A stick-figure rap music video. PLAN 9, a bunny (inspired by Bell Labs' Plan 9 operating system mascot Glenda), types "make it better" at 3 AM, gets sucked into the LLM Upside Down, and raps about hallucinations, attention heads, and the transformer that ate his soul.

"I'm PLAN 9, the bunny, I code with the best. Hit agent mode at midnight, put my prompt to the test."

The hook is sung by an ethereal female voice running Kate Bush energy through an LLM dread filter. The bunny escapes by closing the context window. There is a gag at the end.

This one is pure fun.

How They Were Made

The stack is absurd and I love it.

Animation: Pillow (PIL). Python draws stick figures onto blank images, frame by frame. 854x480 pixels. 12 frames per second. Each scene is a single Python script, 200-500 lines, that renders every frame to PNG and assembles them with FFmpeg. The stick figure library has 27 poses, 11 facial expressions, and 5 body styles. Characters walk, gesture, breathe, and emote through interpolated pose blending.
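To make "interpolated pose blending" concrete, here is a minimal sketch, not the actual Napkin Films code. The pose names, joint names, and coordinates are all hypothetical. It assumes a pose is a dictionary of joint positions and that in-between frames are plain linear blends. The real library may store poses differently or use easing curves. Each blended pose would then be drawn with Pillow (for example with `ImageDraw.line`) and the PNGs assembled by an FFmpeg command like the one built at the end.

```python
# Hypothetical sketch: pose names, joints, and coordinates are illustrative only.
FPS = 12                    # frame rate stated in the post
WIDTH, HEIGHT = 854, 480    # canvas size stated in the post

# A pose maps joint names to (x, y) pixel coordinates on the canvas.
POSE_STAND = {"head": (427, 180), "hip": (427, 300),
              "hand_l": (397, 290), "hand_r": (457, 290)}
POSE_WAVE = {"head": (427, 180), "hip": (427, 300),
             "hand_l": (397, 290), "hand_r": (487, 170)}


def blend_pose(a, b, t):
    """Linearly interpolate every joint from pose a (t=0) to pose b (t=1)."""
    return {
        joint: (ax + (b[joint][0] - ax) * t, ay + (b[joint][1] - ay) * t)
        for joint, (ax, ay) in a.items()
    }


def tween(a, b, seconds):
    """Return one blended pose per frame for a transition lasting `seconds`."""
    n = max(2, round(seconds * FPS))
    return [blend_pose(a, b, i / (n - 1)) for i in range(n)]


def ffmpeg_command(pattern="frames/frame_%04d.png", out="scene.mp4"):
    """Build (but do not run) an FFmpeg call that turns numbered PNGs into video."""
    return ["ffmpeg", "-y", "-framerate", str(FPS), "-i", pattern,
            "-c:v", "libx264", "-pix_fmt", "yuv420p", out]


# Half a second of arm-raising at 12 fps is six frames.
frames = tween(POSE_STAND, POSE_WAVE, seconds=0.5)
```

At 12 frames per second every pose change is visible, so a short transition like this one only needs a handful of blended frames.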

Music: ChipForge, a chiptune synthesis engine I built from scratch. Inspired by the 1989 Game Boy DMG sound chip. 168 instrument presets, 19 synthesis types, Schroeder reverb, feedback delay, stereo panning. Every sound is a numpy array. No samples. No recordings. No pydub, no librosa, no pygame. Pure math rendered to 16-bit PCM WAV.
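For a sense of what "every sound is a numpy array" means in practice, here is a small sketch. It is not ChipForge itself. The function names, the 44.1 kHz sample rate, and the parameter defaults are my assumptions for illustration. It synthesizes a pulse wave, runs it through a simple feedback delay, and packs the result as 16-bit PCM using only numpy and Python's standard `wave` module.

```python
import io
import wave

import numpy as np

SAMPLE_RATE = 44100  # assumed; the post does not state ChipForge's rate


def square_wave(freq_hz, seconds, duty=0.5, amplitude=0.5):
    """Pulse wave in [-amplitude, amplitude]; duty sets the high fraction."""
    n = int(round(SAMPLE_RATE * seconds))
    phase = (np.arange(n) / SAMPLE_RATE * freq_hz) % 1.0
    return np.where(phase < duty, amplitude, -amplitude)


def feedback_delay(signal, delay_seconds=0.25, feedback=0.4):
    """out[n] = x[n] + feedback * out[n - d], computed one delay-block at a time."""
    d = int(round(SAMPLE_RATE * delay_seconds))
    out = signal.astype(np.float64).copy()
    for start in range(d, len(out), d):
        end = min(start + d, len(out))
        # The block one delay earlier is already final, so it can be fed back.
        out[start:end] += feedback * out[start - d:end - d]
    return out


def to_pcm16(signal):
    """Clip to [-1, 1] and scale to little-endian signed 16-bit integers."""
    return (np.clip(signal, -1.0, 1.0) * 32767).astype("<i2")


def wav_bytes(pcm):
    """Wrap mono 16-bit PCM samples in a WAV container, in memory."""
    buf = io.BytesIO()
    with wave.open(buf, "wb") as w:
        w.setnchannels(1)
        w.setsampwidth(2)  # 2 bytes = 16 bits
        w.setframerate(SAMPLE_RATE)
        w.writeframes(pcm.tobytes())
    return buf.getvalue()


note = square_wave(440.0, 0.5)                      # half a second of A4
echoed = feedback_delay(note, delay_seconds=0.1)    # add decaying echoes
pcm = to_pcm16(echoed)
data = wav_bytes(pcm)
```

Changing `duty` away from 0.5 changes the timbre of the pulse wave, which is one of the classic ways chiptune instruments are differentiated.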

Voice: ElevenLabs TTS with my cloned voice (joshua_self persona). 20 emotion settings. The voice scripts define every line with frame-accurate timing, emotion tags, and intensity values. A mixer places each clip on a timeline and exports a single WAV for FFmpeg to mux with the video and score.
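The timeline mixing step can be sketched as follows. This is an illustration under assumptions, not the actual mixer. The function names and the 44.1 kHz sample rate are hypothetical, and clips are assumed to be mono float arrays that have already been generated. The core idea is converting a frame number at 12 fps into a sample offset and summing each clip into one track.

```python
import numpy as np

FPS = 12             # frame rate stated in the post
SAMPLE_RATE = 44100  # assumed; 44100 / 12 = 3675 samples per frame exactly


def frame_to_sample(frame):
    """Convert a video frame index into an audio sample offset."""
    return frame * SAMPLE_RATE // FPS


def mix_timeline(clips, total_frames):
    """Sum (start_frame, mono_float_array) clips into a single mono track."""
    track = np.zeros(frame_to_sample(total_frames), dtype=np.float64)
    for start_frame, audio in clips:
        start = frame_to_sample(start_frame)
        end = min(start + len(audio), len(track))
        if end > start:  # skip clips that start past the end of the film
            track[start:end] += audio[:end - start]
    return track


# Two placeholder "voice lines": constant arrays standing in for real audio.
line_a = np.full(3675, 0.25)   # one frame long, starts at frame 0
line_b = np.full(7350, 0.5)    # two frames long, starts at frame 12 (1 second)
track = mix_timeline([(0, line_a), (12, line_b)], total_frames=24)
```

Keying clips to frame numbers rather than seconds keeps the voice locked to the picture, since both are derived from the same frame count.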

Direction: Claude in agent mode. I describe the film. The agent writes the scene script, generates the voice lines, renders the frames, assembles the video, and scores it with ChipForge music. My role is creative direction: what is the story, what should it feel like, where does it need more breathing room. The agent handles the 400 lines of Python that make it happen.

Three tool calls to create a film. One to write the script. One to render. One to score. For a detailed look at how agent-mode development works as a daily practice, "Agentic Development: How to Build Software with AI Agent Workflows" covers the full workflow.

Why Stick Figures

Constraint is the feature.

When you strip animation down to stick figures on a black canvas at 12fps, the only thing left is the story and the performance. You cannot hide behind production value. The writing has to carry. The timing has to land. The voice has to mean it.

The Game Boy had four sound channels and 4-bit sample depth. Composers made music that people still hum 35 years later. The constraint forced invention. ChipForge follows the same logic. Napkin Films follows the same logic. So does Pixel Vault, a museum of hundreds of HTML5 game prototypes, each one under 50KB, each one a single file. Same philosophy, different medium.

And there is something honest about a stick figure telling you a fable about a boy who fell from the stars. The simplicity disarms you. You stop evaluating the production and start listening to the story.

What Comes Next

These four films are the first public release from Napkin Films. There are more rendered and ready. The engine is open source (GPL-3.0), the original films are Creative Commons (CC BY-NC 4.0), and the ChipForge scores are CC BY-SA 4.0.

The films live on the Organic Arts LLC YouTube channel, where you can watch the full Napkin Films playlist. The code lives on GitHub. The story continues here.

If a solo developer and an AI agent can produce a film studio's worth of animated shorts from nothing but Python and numpy, what else is possible? That question keeps me up at night. In a good way.

The next batch arrived the following day: HUMANS.EXE and Ten Thousand Days, a Hitchhiker's Guide-style field guide to the human operating system and a meditation on finite time, both built in a single day. Day three brought Deduct Yourself, a Tax Day music video with double-time rap over Grieg's In the Hall of the Mountain King, reborn as chiptune rave. Then came Stars Press Start, a 21-pass music-film; the session that built six films, including the 1984-style PSA (Humagent, and the Road There); and the ten-minute theological tribunal, The Reckoning. The Napkin Films stack keeps pushing: Plan 9 Rap Battle brought autotuned spit bars over trap. Agent Mode: Plan 9 x OG Bobby Johnson pushed it further with five WhatsApp voice memos chopped into DJ loops and a russet potato named OG Bobby Johnson.

All four films were produced with Napkin Films and ChipForge, open source tools built by Joshua Ayson and AI agents at Organic Arts LLC.