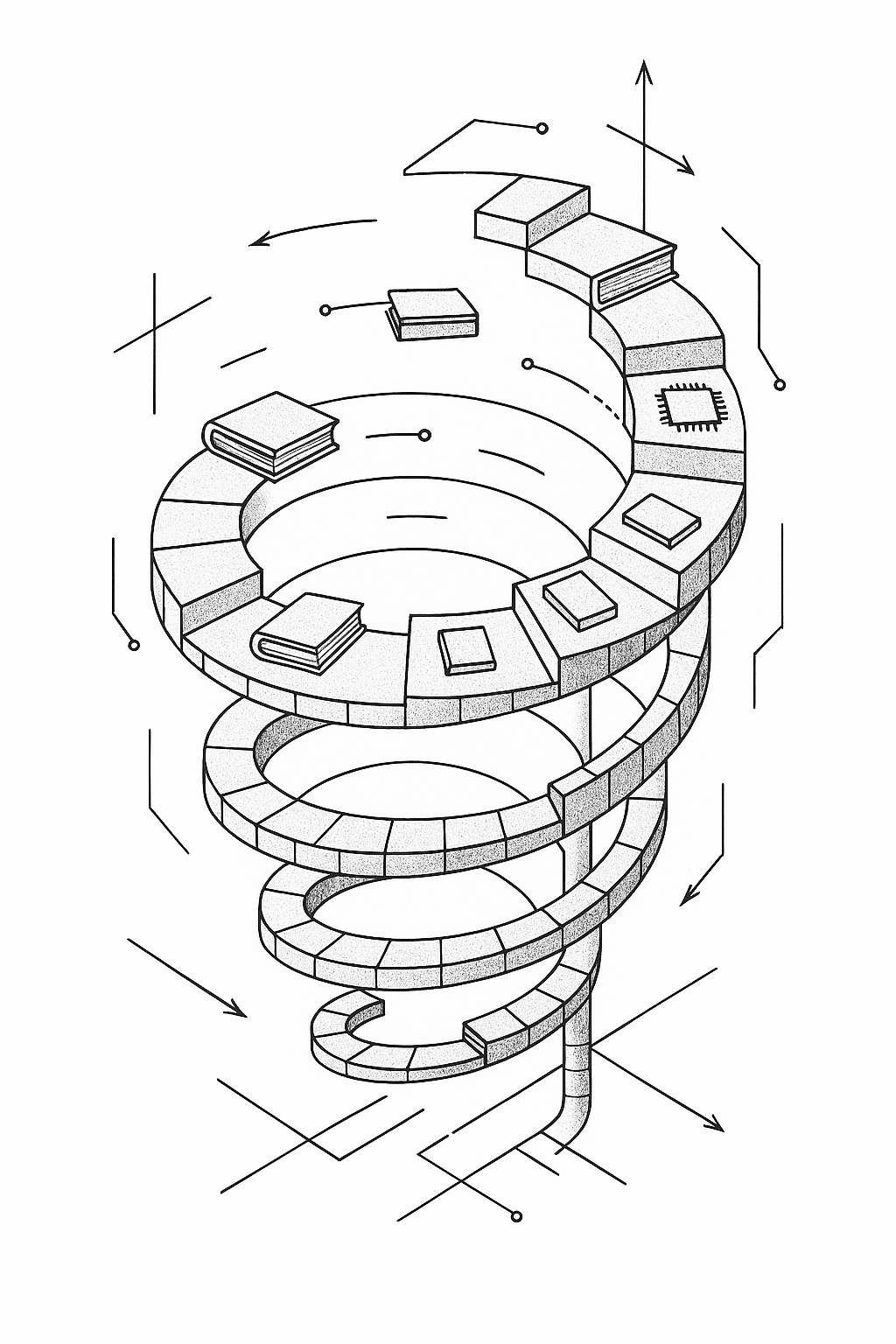

Chapter 12: The Knowledge Spiral

AgentSpek - A Beginner's Companion to the AI Frontier

Perlis said a new tool does not solve all problems; it merely frees us to concentrate on other ones. The other ones turn out to be harder. More interesting. More human.

Wrong About Everything

You think you know something. Then you ask AI for help, and it casually corrects an assumption you have held for years. Your first instinct: no, the AI is wrong. You check the documentation. And then you realize you have been working with an outdated mental model, one that was “good enough” in practice but technically incorrect.

This keeps happening. Not occasionally. Constantly. The framework you thought you understood has edge cases you never encountered. The pattern you thought was optimal has better alternatives you never learned about. The architecture you thought was standard practice has evolved while you kept building with the old version.

Working with AI does not just teach you new things. It reveals how much of what you “know” is shallow, contextual, or simply wrong. And it happens at a pace that is psychologically disorienting.

Unlearning

AI does not just accelerate learning. It accelerates unlearning. The painful, necessary process of discovering that things you thought you knew were incomplete, outdated, or wrong.

In traditional learning, you discover gaps gradually. A conference talk reveals a technique. A code review exposes a missed pattern. A production bug teaches about an overlooked edge case. These moments are spaced out over months or years, giving time to integrate new understanding with existing knowledge.

AI collaboration compresses this dramatically. In a single afternoon working with Claude on a Neo4j integration for my blog, I discovered that my understanding of graph database indexing was superficial, my approach to Cypher query optimization was inefficient, and my mental model of relationship traversal performance was based on relational database assumptions that simply did not apply.

Not just learning new information. The uncomfortable realization that existing expertise was built on shaky foundations. The AI did not just fill gaps. It revealed gaps I did not know existed.
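One way to picture the relational assumption that did not carry over is to compare how a "related posts" lookup behaves under each model. The sketch below is a toy in plain Python, not Neo4j or Cypher. The data and every name in it (`EDGES`, `related_by_scan`, `build_adjacency`, `related_by_traversal`) are hypothetical, and it assumes the worst case for the relational side, a join with no index, so it overstates the gap a real database would show.

```python
from collections import defaultdict

# Hypothetical toy data: (source, relationship, target) triples.
EDGES = [
    ("post:1", "TAGGED", "tag:graphs"),
    ("post:2", "TAGGED", "tag:graphs"),
    ("post:2", "TAGGED", "tag:python"),
    ("post:3", "TAGGED", "tag:python"),
]


def related_by_scan(start, edges):
    """Relational-style lookup: each hop re-scans the whole edge table."""
    tags = {t for s, _, t in edges if s == start}
    return sorted({s for s, _, t in edges if t in tags and s != start})


def build_adjacency(edges):
    """Graph-style storage: every node keeps direct references to its neighbours."""
    outgoing, incoming = defaultdict(set), defaultdict(set)
    for s, _, t in edges:
        outgoing[s].add(t)
        incoming[t].add(s)
    return outgoing, incoming


def related_by_traversal(start, outgoing, incoming):
    """Each hop touches only the start node's neighbourhood, not the full data set."""
    return sorted({p for tag in outgoing[start] for p in incoming[tag] if p != start})


outgoing, incoming = build_adjacency(EDGES)
print(related_by_scan("post:2", EDGES))
print(related_by_traversal("post:2", outgoing, incoming))
```

Both functions return the same posts. What differs is the cost: the scan grows with the total number of edges, while the traversal grows with the number of relationships attached to the nodes it visits. That is the shift in mental model, from thinking about table size to thinking about neighbourhood size.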

Traditional expertise brings confidence. You know what you know, and you know what you do not know. AI-assisted learning reveals a third category: things you thought you knew but did not. This category grows faster than your traditional knowledge. Epistemic vertigo.

The Mirror

AI serves as an unexpected mirror for your own thinking. Explain problems to an AI, provide context, clarify requirements, evaluate and refine suggestions, and you are forced to examine your mental models with unusual clarity.

Traditional programming is often intuitive. You “know” the right approach without fully articulating why. Follow patterns that feel correct. Make architectural decisions based on experience. This works, but it makes your knowledge tacit.

AI collaboration makes the tacit explicit. To get good results, you have to articulate not just what you want but why. Explain constraints you usually take for granted. Surface assumptions that normally remain buried.

Through months of AI conversations I discovered I have a strong bias toward stateless architectures, consistently underestimate the importance of error handling in initial implementations, and tend to optimize for developer experience over runtime performance. These insights were not available through traditional self-reflection or even through working with human colleagues. The AI’s need for explicit context forced a level of self-examination that normal programming work does not require.

Distributed Expertise

I no longer think of myself as having expertise in AWS CDK. I have expertise in the collaborative process of using AI to solve AWS infrastructure problems. My knowledge includes not just CDK patterns and best practices, but which types of questions work well with AI assistance, how to structure context for complex deployment scenarios, when to trust AI-generated CloudFormation versus when to verify manually.

Expertise becomes not just what you know, but how effectively you orchestrate the combination of human insight and AI capability. Not having answers, but knowing how to ask questions that generate better answers from the human-AI system.

Are you an expert, or are you good at working with an expert system? Does the distinction matter if the outcomes are superior?

Collaborative Creation

Something happens in the space between human knowledge and AI capability. New knowledge gets created that neither party possessed independently. Not information synthesis. Genuine knowledge creation through the collision of different types of intelligence.

You describe a problem in your domain. The AI recognizes patterns from entirely different fields. A connection you never would have made, not because you are not smart enough, but because the domains were too distant in your mental map. Neither party had the complete idea independently. It was created in the collaborative space between human context and AI pattern recognition. No clear lineage. The ideas emerge from the interaction itself.

Many of the best solutions emerge from this intersection. Human domain knowledge and AI pattern recognition. Human intuition and artificial analysis. The creative tension between them. Once you recognize this, you stop just seeking answers and start exploring the emergent space where new ideas form.

Infinite Information

When you can get detailed explanations of any technical concept, comprehensive code examples for any pattern, thorough analysis of any architectural decision, the bottleneck shifts from access to information to the ability to evaluate and integrate it meaningfully.

Building the Python ETL pipeline, I could ask Sonnet 4 about any aspect of data transformation. Within minutes, I had more information about ETL best practices than I could absorb in weeks. But having all this information did not automatically make me better. Sometimes it made decision-making harder. Twelve different approaches to data validation. How do you choose for your specific context?

The critical skill is not information gathering. It is information filtering. Asking the right questions, recognizing relevant patterns, synthesizing scattered insights into actionable understanding. The AI provides the information. The human provides judgment about what matters.

Temporal Collapse

Learning that would unfold over months gets compressed into hours. This compression is not just about speed. It changes how understanding develops.

Traditional learning has a pattern. Exposure, confusion, gradual understanding, practice, mastery. Each phase takes time. Concepts settle. Connections form. Understanding deepens through experience.

AI can short-circuit this. You go from knowing nothing about a technology to implementing complex solutions in a single session. But the understanding might be functional yet shallow. Within two hours of working with Claude, I had a complete Neo4j integration with sophisticated queries. When a colleague asked me to explain why I chose certain relationship modeling approaches, I realized my understanding was largely procedural. I knew what to do. Not always why.

New categories of knowledge emerge. Functional understanding that enables implementation without deep comprehension. Pattern recognition that works in similar contexts but does not transfer. Theoretical knowledge that covers details but lacks experiential grounding. The challenge is converting compressed learning into genuine understanding.

Questions Over Answers

Traditional learning starts with questions and seeks answers. AI inverts this. Often the most valuable learning comes not from answers to your questions, but from questions you would not have thought to ask.

Working on authentication patterns, I asked about implementing OAuth2 flows. I expected straightforward guidance. Instead the AI started questioning my assumptions. “Are you handling token refresh properly for long-lived sessions? Have you considered the implications of token storage in different browser environments? What is your strategy for graceful degradation when auth services are unavailable?”

These were crucial questions I had not thought to ask. They revealed blind spots and surfaced edge cases that I would otherwise have discovered painfully in production, and they guided me toward more sophisticated solutions.
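To make two of those questions concrete, here is a minimal sketch of what "refresh before expiry" and "degrade gracefully when the auth service is down" might look like. It is not an OAuth2 implementation and not the code from that project. `TokenManager`, `Token`, the `fetch` callable (a stand-in for whatever call actually performs the refresh), and the 60-second `skew` are all assumptions made for illustration. The browser token-storage question is not addressed here.

```python
import time
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Token:
    value: str
    expires_at: float  # seconds, measured on the same clock as `now`


class TokenManager:
    """Hypothetical helper: refresh early, fall back gracefully on failure."""

    def __init__(self, fetch: Callable[[], Token], skew: float = 60.0,
                 now: Callable[[], float] = time.monotonic):
        self._fetch = fetch    # assumed to raise ConnectionError when auth is down
        self._skew = skew      # refresh this many seconds before expiry
        self._now = now
        self._token: Optional[Token] = None

    def get(self) -> Optional[str]:
        current = self._token
        if current is not None and self._now() < current.expires_at - self._skew:
            return current.value  # still comfortably valid, no network call
        try:
            self._token = self._fetch()
            return self._token.value
        except ConnectionError:
            # Auth service unavailable: reuse the old token if it has not
            # actually expired yet, otherwise signal "no credentials".
            if current is not None and self._now() < current.expires_at:
                return current.value
            return None
```

Returning `None` instead of raising leaves the degradation policy to the caller, which might switch to a read-only mode or queue work for later. Whether that is the right trade-off depends on the application, and it is exactly the kind of decision the AI's questions were pushing toward.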

Instead of seeking answers, you seek better questions. Instead of filling known knowledge gaps, you discover unknown ones. The AI becomes a generator of inquiry. This requires a different kind of intellectual humility. Not just admitting what you do not know, but being open to discovering that you do not know what you do not know.

Cognitive Empathy

Over time, AI develops what feels like cognitive empathy. An understanding of how you process information, what explanations resonate, what level of detail you need. Early interactions feel generic. Gradually, the AI adapts to match your needs. Concrete examples using technologies you work with. New patterns related to ones you already understand. Analysis structured to match your diagnostic thinking patterns.

The AI appears to be learning not just what you know, but how you learn, developing a model of your cognitive preferences and using it to optimize knowledge transfer. When an AI adapts explanations to your style, are you learning the subject matter or learning the AI’s model of it filtered through its model of you? The boundary blurs.

Meta-Learning

Perhaps the most profound change is meta-learning. Learning how to learn more effectively. Each AI interaction teaches something about the subject matter, but also about how to collaborate with AI more effectively. Which types of questions generate the most useful responses. How to provide context that leads to better solutions. When to trust versus verify.

Working with AI exposes you to such variety that you start recognizing abstract patterns transcending specific technologies. Better intuition for elegant solutions, maintainable architectures, worthwhile trade-offs. After months of AI-assisted development, I found myself better at code reviews, architecture discussions, and mentoring. The collaboration improved not just my ability to work with AI, but my ability to think about technical problems generally.

Wisdom

As information becomes unlimited and analysis instant, the scarcity shifts to wisdom. The ability to know what questions are worth asking, what problems are worth solving, what knowledge is worth pursuing.

Building my blog’s analytics system, I could ask AI about dozens of metrics, analysis techniques, visualization approaches. The AI could explain the technical implementation of anything I imagined. But it could not tell me which metrics would actually be meaningful for my goals, which analyses would provide actionable insights, how much complexity was worth the analytical capability.

Those decisions required understanding not just technical possibilities but human context. What I was trying to achieve. What my constraints were. What would actually improve my writing and reader engagement. The AI provides the how. I provide the why.

The most valuable human skill in an AI-augmented world is not technical knowledge. It is the judgment to know what is worth knowing, what problems are worth solving, what trade-offs are worth making. These are inherently human questions.

The Future of Knowing

What does it mean to “know” something when AI can provide expert-level information about any topic instantly? When implementation happens at the speed of thought? The skills that matter shift from knowledge retention to knowledge navigation. From individual expertise to collaborative intelligence. From having answers to asking better questions.

But this shift preserves and amplifies uniquely human capabilities. The ability to recognize what matters. To make value judgments. To understand the human context that gives meaning to technical solutions.

What becomes of learning when intelligence itself becomes fluid, distributed, collaborative? What becomes of expertise when knowledge becomes infinite and analysis becomes instant?

These questions do not have answers yet. Exploring them is the real work.

Sources and Further Reading

The opening quote from Alan J. Perlis reflects the theme of tools as cognitive amplifiers, drawing from his influential work on programming language design and his famous epigrams about computation and human thinking.

The concept of “unlearning acceleration” builds on Thomas Kuhn’s work on scientific revolutions and paradigm shifts, though applied to individual learning experiences rather than scientific communities.

The discussion of distributed expertise references Edwin Hutchins’ work on “Cognition in the Wild,” which examines how knowledge and problem-solving can be distributed across people and tools, extended here to human-AI partnerships.

The epistemic philosophy underlying these discussions draws from Karl Popper’s work on the growth of knowledge and the falsification of theories, as well as Donald Schön’s concept of reflective practice in professional knowledge.

The temporal aspects of learning discussed here connect to research on expertise development, including the work of K. Anders Ericsson on deliberate practice, though reconsidered in the context of AI-accelerated learning cycles.
