Building a Language Engine You Can Actually Own

Velocidad-AI is an open-source Spanish learning engine licensed under GPL-3.0. It ships twenty reference files, including a Cognate Accelerator that unlocks thousands of words and a military-style Field Manual for daily practice, plus five real-world scenario ladders, six agent prompts, two hundred cloze exercises, and fifteen memory techniques. The reference content is CC BY-SA 4.0: fork it, adapt it, share it. No app, no account, no subscription.

The methodology behind Velocidad is simple: speak first, log what breaks, drill the friction, speak again. But the loop needs material. Prompts to drive the roleplay. Scenario ladders that match real situations. A reference library you can pull up standing in line at a restaurant.

The whole engine is on GitHub, free to fork and free to browse. The engine code is GPL-3.0: open source, and derivatives stay open. The reference content (scenarios, vocabulary, methods) is Creative Commons BY-SA 4.0: fork it, adapt it, and share it with attribution. No app, no account, no subscription. Just markdown files that load on your phone and a set of agent prompts you paste into any AI chat.

The Reference Library

Twenty files, organized into four sections: System, Reference, Methods, and Field Guides.

The System section is where you start. Five files covering the structural core of Spanish. The sound system with its five invariant vowels and a consonant threat matrix that flags every letter that doesn't behave like English. The sentence engine that shows how SVO assembly actually works. The verb system broken into three families with the thirteen irregulars you can't avoid. Connectors that glue clauses together. And pattern templates you can slot words into like sentence-level Mad Libs.

The first file in that section carries the most weight.

The Operating System

It's called The Operating System, and it covers the 300 structural words that ARE the language. Not topic words. Not food vocabulary or color names. The infrastructure words that appear in every sentence regardless of subject.

You can't follow spoken Spanish yet because you know the content words but not the bolts and brackets between them. You hear the nouns. You miss the eight words connecting them. You catch "mañana" and "ella" but lose the entire sentence around them.

This file organizes those 300 words by function, not alphabet. Ten word classes that build every sentence: subject pronouns, object pronouns (the tiny syllables before verbs your ear hasn't trained to catch yet), negators, verb forms, prepositions, question words, connectors, time markers, quantity markers, existence markers. Each class gets a clear explanation of what job it does in a sentence and why English speakers specifically miss it.

Master those ten classes and you can parse any Spanish sentence even when you don't know every content word. You'll hear the structure even when the vocabulary is new.
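Here is a minimal Python sketch of structure-first parsing. It tags the function words you know and marks everything else as content. The mini-lexicon is a hypothetical handful of words per class, not the repo's 300-word list. It also gives each word exactly one class, so it ignores the real ambiguity some Spanish words carry.

```python
from __future__ import annotations

import re

# Hypothetical mini-lexicon: a few words per class, NOT the repo's 300-word list.
# Each word belongs to exactly one class here; real Spanish has ambiguous words.
FUNCTION_CLASSES: dict[str, set[str]] = {
    "subject_pronoun": {"yo", "tú", "él", "ella", "usted", "nosotros", "ellos", "ellas"},
    "object_pronoun": {"me", "te", "lo", "le", "nos", "les", "se"},
    "negator": {"no", "nunca", "nadie", "nada"},
    "verb_form": {"es", "está", "tengo", "tiene", "voy", "va", "dio", "quiero", "puede"},
    "preposition": {"a", "de", "en", "con", "para", "por", "sin"},
    "question_word": {"qué", "dónde", "cuándo", "cómo", "quién"},
    "connector": {"y", "pero", "porque", "que", "si", "cuando"},
    "time_marker": {"ya", "todavía", "hoy", "ayer", "mañana", "después"},
    "quantity_marker": {"mucho", "poco", "más", "menos", "todo", "algo"},
    "existence_marker": {"hay", "había", "habrá"},
}

# Invert to word -> class for constant-time lookup.
WORD_CLASS = {word: cls for cls, words in FUNCTION_CLASSES.items() for word in words}


def tag_structure(sentence: str) -> list[tuple[str, str]]:
    """Label each token with its function class, or CONTENT if it is not a known function word."""
    tokens = re.findall(r"\w+", sentence.lower())  # \w matches accented letters and ñ in Python 3
    return [(tok, WORD_CLASS.get(tok, "CONTENT")) for tok in tokens]


for token, cls in tag_structure("Ella no me lo dio porque no hay tiempo mañana."):
    print(f"{token:>8}  {cls}")
```

In that sentence only "tiempo" comes back as CONTENT. The other nine tokens are scaffolding, which is the point of the file.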

The Cognate Accelerator

This one earns its own section. Eighteen suffix transformation rules that let you convert thousands of English words into correct Spanish on the fly.

English borrowed massively from Latin through French. Spanish came from Latin directly. The words are cousins. And the suffix patterns are systematic.

Words ending in -tion become -ción. Communication becomes comunicación. Words ending in -ty become -dad. University becomes universidad. Words ending in -ous become -oso. Famous becomes famoso. And on it goes: -ment to -mento, -ance to -ancia, -ence to -encia, -ble stays -ble, -al stays -al. Eighteen rules total. Each one unlocks dozens to hundreds of words.

The file includes tables for every rule with examples, success rates, and the false friends that break the pattern. Because there are always false friends. But eighteen rules and a short exception list gives you a working recognition vocabulary of thousands of Spanish words. The document calls it the single highest-ROI hour you'll spend on the language. That tracks.
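The suffix rules are mechanical enough to sketch in code. The Python below has a few limits worth stating up front:

- It implements only the eight rules named above; the file has eighteen.
- It adds one assumption of its own: doubled English consonants such as mm, ss, and tt collapse to a single letter, as in comunicación and posible.
- Its three false friends are well-known examples chosen for illustration, not the repo's exception list.

```python
from __future__ import annotations

import re

# Eight of the file's eighteen rules (English suffix -> Spanish suffix),
# sorted longest-first so a longer suffix is always tried before a shorter one.
SUFFIX_RULES = sorted(
    [("tion", "ción"), ("ty", "dad"), ("ous", "oso"), ("ment", "mento"),
     ("ance", "ancia"), ("ence", "encia"), ("ble", "ble"), ("al", "al")],
    key=lambda rule: len(rule[0]),
    reverse=True,
)

# Illustrative false friends: the rules produce a real Spanish word with a different meaning.
FALSE_FRIENDS = {
    "actual": "Spanish 'actual' means current; English 'actual' is 'real'",
    "sensible": "Spanish 'sensible' means sensitive; English 'sensible' is 'sensato'",
    "constipation": "'constipación' is a head cold; English 'constipation' is 'estreñimiento'",
}


def cognate(word: str) -> str | None:
    """Apply the first matching suffix rule, or return None if no rule applies."""
    w = word.lower()
    for en, es in SUFFIX_RULES:
        if w.endswith(en) and len(w) > len(en):
            # Assumption: collapse doubled consonants that Spanish spells single (mm, ss, tt...).
            stem = re.sub(r"([fmpst])\1", r"\1", w[: -len(en)])
            return stem + es
    return None


def lookup(word: str) -> tuple[str | None, str | None]:
    """Return the candidate Spanish form plus a false-friend warning, if any."""
    return cognate(word), FALSE_FRIENDS.get(word.lower())


for w in ["communication", "university", "famous", "difference", "sensible"]:
    guess, warning = lookup(w)
    print(f"{w} -> {guess}" + (f"  (careful: {warning})" if warning else ""))
```

The sketch still gets some words wrong. City becomes "cidad", not ciudad. That is why the file pairs each rule with success rates and exceptions.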

The Mnemonic Dictionary

A hundred and sixty-five hard-to-remember Spanish words, each paired with a keyword mnemonic and a vivid mental image.

Take madrugada (early morning, dawn). The mnemonic: "The MADRE (mother) always gets up at the MADRUGADA." The image: your mom in the dark kitchen, making coffee before anyone else is awake.

Organized by category. Survival words, daily life, descriptions, emotions, time, places. Each entry has the word, the translation, a keyword that sounds like it, a vivid picture to lock it in memory, and an example sentence. The images are deliberately weird, physical, and sticky. That's what makes them work. This isn't a dictionary. It's a memory hack disguised as a word list.
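Each entry has a fixed shape, so it maps naturally onto a small record type. This hypothetical Python sketch shows one entry and the flashcard it could produce. The field names and the example sentence ("Me levanto de madrugada," I get up at dawn) are illustrative, not copied from the file.

```python
from __future__ import annotations

from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class MnemonicEntry:
    """One dictionary entry; field names are hypothetical."""
    word: str
    translation: str
    keyword: str   # a word that sounds like the target
    image: str     # the vivid scene that binds keyword to meaning
    example: str
    category: str  # e.g. survival, daily life, time


def flashcard(entry: MnemonicEntry) -> tuple[str, str]:
    """Front shows the Spanish word; back bundles meaning, hook, image, and usage."""
    back = (f"{entry.translation}\n"
            f"Keyword: {entry.keyword}\n"
            f"Picture: {entry.image}\n"
            f"Example: {entry.example}")
    return entry.word, back


def by_category(entries: list[MnemonicEntry]) -> dict[str, list[str]]:
    """Group words by category, mirroring how the dictionary is organized."""
    groups: dict[str, list[str]] = defaultdict(list)
    for e in entries:
        groups[e.category].append(e.word)
    return dict(groups)


madrugada = MnemonicEntry(
    word="madrugada",
    translation="early morning, dawn",
    keyword="MADRE (mother)",
    image="Your mom in the dark kitchen, making coffee before anyone else is awake.",
    example="Me levanto de madrugada.",
    category="time",
)
front, back = flashcard(madrugada)
print(front)
print(back)
```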

The AI Reverse Engineering Handbook

The file opens with: "You don't learn a language. You reverse engineer a communication system." Then it does exactly that across fourteen sections.

A seven-layer language stack that treats Spanish the way a network engineer treats a protocol. An English-to-Spanish interference map showing exactly where your native language will sabotage you. Error archaeology that treats your mistakes as diagnostic data rather than failures. Compression theory that explains fluency as the increasing compression of conscious processing into automatic chunks. A frequency-weighted ROI engine that calculates which words and structures give you the most communicative power per hour invested.

If you learn by understanding systems rather than memorizing pieces, this file reframes the entire project. It doesn't teach you Spanish. It gives you the architecture of Spanish so you can teach yourself.

The Field Manual

Imagine a military field manual, but for Spanish. Eleven sections plus three appendices, formatted like an operations document, designed for daily use.

Phonetic weapons system with mouth position charts and drill protocols. Immediate action drills for common scenarios. A verb operations center. Sentence assembly manual. Pronoun targeting system. Preposition field guide. Temporal operations for past and future tenses. Advanced clause architecture. Field deployment scenarios. Listening intercept protocols. And a daily training protocol that ties it all together.

The appendices include verb conjugation tables, the hundred highest-value vocabulary words, and a survival card you could print and carry in your pocket.

Open it every day. Work one section. Mark your position. Come back tomorrow. It's the least glamorous document in the repo and probably the most useful for daily practice.

The Methods

Four files that cover how to practice, not what to practice.

Rapid Acquisition Methods documents eighteen learning techniques across six vectors: production-first, input-first, memory systems, active recall, embodied learning, and meta-cognitive. Each method includes the science behind why it works, practical steps, and notes on how it fits the rest of the system. TPRS, the Output Hypothesis, shadowing protocols, interleaving practice. Eighteen methods with citations.

The Cloze Method file explains the most effective flashcard format for language learning and then gives you two hundred exercises across six difficulty tiers. A cloze is a sentence with a gap. Your brain produces the missing word from context, building grammar and vocabulary simultaneously. Five cloze types (vocabulary, verb form, function word, phrase, and transformation), six tiers, two hundred exercises ready to go.
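Turning a sentence into a gap-style cloze is a small string operation. This minimal Python sketch assumes each card stores one type and one tier (1 to 6), and its class and function names are hypothetical. Transformation cards ask you to rewrite a whole sentence, so they would need a different prompt shape than the single gap shown here.

```python
from __future__ import annotations

import re
from dataclasses import dataclass
from enum import Enum


class ClozeType(Enum):
    VOCABULARY = "vocabulary"
    VERB_FORM = "verb form"
    FUNCTION_WORD = "function word"
    PHRASE = "phrase"
    TRANSFORMATION = "transformation"


@dataclass(frozen=True)
class Cloze:
    prompt: str      # sentence with the gap, plus an optional hint
    answer: str      # what the learner must produce
    kind: ClozeType
    tier: int        # assumed: 1 (easiest) to 6 (hardest)


def make_cloze(sentence: str, answer: str, kind: ClozeType, tier: int, hint: str = "") -> Cloze:
    """Blank out the first whole-word occurrence of `answer` in `sentence`."""
    if not 1 <= tier <= 6:
        raise ValueError("tier must be between 1 and 6")
    pattern = r"\b" + re.escape(answer) + r"\b"
    if re.search(pattern, sentence) is None:
        raise ValueError(f"{answer!r} does not appear in {sentence!r}")
    prompt = re.sub(pattern, "___", sentence, count=1)
    return Cloze(f"{prompt} ({hint})" if hint else prompt, answer, kind, tier)


def check(card: Cloze, response: str) -> bool:
    """Accept the answer regardless of surrounding whitespace or capitalization."""
    return response.strip().lower() == card.answer.lower()


verb = make_cloze("Ella fue a la tienda ayer.", "fue", ClozeType.VERB_FORM, tier=2, hint="ir")
print(verb.prompt)           # Ella ___ a la tienda ayer. (ir)
print(check(verb, " Fue "))  # True
```

The word-boundary match matters for function-word cards. Blanking "a" in "Voy a trabajar mañana." must not touch the a's inside "trabajar".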

And there's a memory techniques system with fifteen protocols: spaced retrieval, memory palace, chunking chains, backward buildout, phonetic anchoring, emotional encoding, motor pattern drilling, interleaving, story chains, minimal pair drilling, a five-minute daily blitz, sleep consolidation, visual encoding, and production-under-pressure drills. Each one is documented with steps, examples, and a daily routine that fits into twenty-five minutes.

The Rest of the Reference Shelf

A priority learning order that maps an eight-week path from survival to conversation. Week by week. What to master when. Which world to practice in. How many words to add at each stage.

A visual quick reference with structured lookup tables for articles, pronouns, verb endings, prepositions. The thing you pull up when you're mid-sentence and need to check whether it's "el" or "la."

A repair system with the eight phrases that save you when you freeze mid-conversation. The escape hatches.

And for multilingual learners: a German-Spanish bridges file that maps concepts you already know (gendered articles, formal vs informal address, verb conjugation) directly onto Spanish equivalents. Two genders instead of three. No case system. No verb-final subordinate clauses. Phonetic spelling. If you already survived German grammar, Spanish is a relief.

Five Worlds

The reference library is what you study. The worlds are where you practice.

Each world is a real place with real people. McDonald's is the safe lab: warm crew, low stakes, and you already go two or three times a week. Casa is for house workers who come through for projects. Vecinos is the neighbor family where you're building a friendship across the language gap. Familia covers heritage calls with family. Errands covers restaurants, shops, and every counter you hit during the week.

Every world has a five-level ladder.

Level one is survival. Twenty to thirty seconds. Order, pay, leave. Level two adds clarification: ask them to repeat, ask if they have something, ask them to speak more slowly. Level three is small talk. Compliment someone. Ask how their day is going. Level four is real conversation beyond the transaction. Level five is relationship. Jokes, interruptions, stories, being yourself in another language.

One world per week. You don't move to level three until level two feels eighty percent automatic. The scenarios include the exact phrases you'd say, the responses you'd hear, and the recovery moves for when you freeze.
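The "eighty percent automatic" gate can be written as a simple rule. This Python sketch assumes two things the scenario files do not prescribe. First, you score each attempt at the current level as automatic or not. Second, you judge only the last ten attempts.

```python
from __future__ import annotations

from dataclasses import dataclass, field

LEVELS = {1: "survival", 2: "clarification", 3: "small talk",
          4: "real conversation", 5: "relationship"}
THRESHOLD = 0.8   # "eighty percent automatic"
WINDOW = 10       # assumed: judge the most recent ten attempts


@dataclass
class Ladder:
    world: str
    level: int = 1
    attempts: list[bool] = field(default_factory=list)  # True = felt automatic

    def record(self, automatic: bool) -> None:
        self.attempts.append(automatic)

    def automatic_rate(self) -> float:
        recent = self.attempts[-WINDOW:]
        return sum(recent) / len(recent) if recent else 0.0

    def try_advance(self) -> bool:
        """Move up one level only with a full window at or above the threshold."""
        ready = len(self.attempts) >= WINDOW and self.automatic_rate() >= THRESHOLD
        if ready and self.level < max(LEVELS):
            self.level += 1
            self.attempts.clear()  # the new level starts with a clean record
            return True
        return False


ladder = Ladder("McDonald's", level=2)
for outcome in [True] * 8 + [False] * 2:
    ladder.record(outcome)
print(ladder.automatic_rate(), ladder.try_advance(), LEVELS[ladder.level])
```

Requiring a full window means one lucky session can't promote you. The gate only opens after enough attempts to make eighty percent meaningful.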

Six Prompts, One Cycle

The reference library and the worlds are raw material. The agent prompts turn them into practice sessions.

Six prompts. The session runner drives roleplay in a specific world and level. The distiller extracts friction from your transcript afterward. The SRS generator turns your failures into drill cards. The session planner maps tomorrow's session based on what broke today. The meta-observer runs a weekly review of how you learn and adjusts the system itself. And the master prompt combines everything into a single daily engine.

Every prompt enforces fifteen rules of immersion. Spanish first. Comprehensible input, slightly above current level. The learner produces speech, not just listens. Only fix the two or three highest-leverage errors per session. Drill the template, not the vocabulary. Always end with a win. Every scenario practiced must be deployable within forty-eight hours with real people.

The anti-pattern list matters as much as the rules. No grammar lectures. No vocabulary lists. No praise for effort, only for specific improvement. No simulated lessons. Real scenarios only. And the biggest one: if more than ten minutes pass without the learner speaking Spanish, something is wrong.
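The ten-minute rule is easy to check after the fact if the transcript records when each turn happened. This Python sketch assumes a hypothetical transcript format: each turn has a minute, a speaker, and whether it was in Spanish. It reports every window longer than ten minutes with no Spanish from the learner, including the start and end of the session.

```python
from __future__ import annotations

from dataclasses import dataclass

MAX_SILENCE_MIN = 10.0


@dataclass(frozen=True)
class Turn:
    minute: float   # minutes since the session started (assumed transcript format)
    speaker: str    # "learner" or "agent"
    spanish: bool   # True if the turn was in Spanish


def silent_windows(turns: list[Turn], session_end: float) -> list[tuple[float, float]]:
    """Return (start, end) windows longer than MAX_SILENCE_MIN with no learner Spanish."""
    spoke = sorted(t.minute for t in turns if t.speaker == "learner" and t.spanish)
    marks = [0.0, *spoke, session_end]
    return [(a, b) for a, b in zip(marks, marks[1:]) if b - a > MAX_SILENCE_MIN]


transcript = [
    Turn(1, "agent", True),
    Turn(2, "learner", True),
    Turn(5, "learner", False),   # English fallback does not count
    Turn(16, "learner", True),
    Turn(20, "learner", True),
]
print(silent_windows(transcript, session_end=25))  # [(2, 16)]
```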

How I Actually Use It

I never run the system the same way twice.

I open agent mode. I paste the master prompt or the session runner. The agent has the full repo as context: all twenty reference files, all five worlds, the rules of immersion, and the meta-learning methodology. It also has whatever private context I feed it: my learner profile, my friction logs from last session, my SRS card state, and notes about what's been clicking and what hasn't.

Then we just go. The agent picks up where I left off. It knows I've been stuck on object pronouns because the friction logs say so. It knows McDonald's level two is automatic now but vecinos level one still freezes me up. It reads my learning observations and adjusts. Maybe today it throws in an unexpected question mid-order to test my repair phrases. Maybe it notices I've been avoiding past tense and engineers a scenario that forces it.

The system never solidifies into a fixed curriculum. The repo is the raw material. The agent is the cook. My friction data is the ingredient list. Every session assembles something slightly different from those pieces based on where I actually am, not where a lesson plan thinks I should be.

And the repo itself evolves from this. When a session reveals that the repair system needs a ninth phrase, I add it. When the cognate accelerator is missing a suffix pattern that came up in practice, it goes in. When a new world emerges (the barbershop, the mechanic, the parent-teacher conference), it gets a scenario file. The reference library isn't a finished product. It's a living document that grows from real use.

That's the part you can't get from a static app. The engine, the agent, your private context, and your brain form a feedback loop. The repo gets better because you use it. Your sessions get better because the repo improved. The agent gets smarter about you because the friction data accumulates. None of these pieces works as well alone. Together they compound.

Friction, Not Flashcards

Most language tools drill vocabulary. This system drills failures.

After every session, the friction log captures five types of breakdown. Production gaps: things you tried to say but couldn't. Comprehension gaps: phrases you didn't understand. Recurring errors: patterns of mistakes that keep showing up. Pronunciation targets: sounds causing confusion. Avoidance patterns: the structures you're dodging because they feel hard.
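The five breakdown types make a natural enumeration. This Python sketch shows a log entry and a per-session tally. The field names are hypothetical; the repo's Learner Data Spec, not this sketch, defines the real file format.

```python
from __future__ import annotations

from collections import Counter
from dataclasses import dataclass
from enum import Enum


class Friction(Enum):
    PRODUCTION_GAP = "tried to say it but couldn't"
    COMPREHENSION_GAP = "didn't understand it"
    RECURRING_ERROR = "mistake pattern that keeps showing up"
    PRONUNCIATION = "sound causing confusion"
    AVOIDANCE = "structure being dodged"


@dataclass(frozen=True)
class FrictionEntry:
    kind: Friction
    text: str    # the phrase or structure involved, verbatim where possible
    world: str   # e.g. "McDonald's", "Vecinos"
    level: int


def tally(entries: list[FrictionEntry]) -> Counter[Friction]:
    """Count breakdowns by type across one session's log."""
    return Counter(e.kind for e in entries)


log = [
    FrictionEntry(Friction.PRODUCTION_GAP, "¿Me lo puede repetir?", "McDonald's", 2),
    FrictionEntry(Friction.PRODUCTION_GAP, "Quiero uno sin hielo.", "McDonald's", 2),
    FrictionEntry(Friction.AVOIDANCE, "past tense", "Vecinos", 1),
]
for kind, count in tally(log).most_common():
    print(kind.name, count)
```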

Those friction logs feed a four-box Leitner SRS. Box one gets reviewed daily. Box two every other day. Box three every third day. Box four weekly. Hit it twice in a row, it advances. Miss it, back to box one. The cards aren't vocabulary words. They're the exact phrases you tried to produce under real pressure and couldn't. That's the difference.
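The review schedule is compact enough to write out. This minimal Python sketch is a four-box Leitner scheduler using the stated intervals of 1, 2, 3, and 7 days. Two consecutive hits advance a card, and any miss sends it back to box one. The `Card` type and function names are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date, timedelta

# Box -> days until the next review: daily, every other day, every third day, weekly.
INTERVAL_DAYS = {1: 1, 2: 2, 3: 3, 4: 7}
HITS_TO_ADVANCE = 2


@dataclass
class Card:
    phrase: str      # the exact phrase you failed to produce, not a vocabulary word
    due: date
    box: int = 1
    streak: int = 0  # consecutive hits in the current box


def review(card: Card, hit: bool, today: date) -> Card:
    """Apply one review result and reschedule the card."""
    if hit:
        card.streak += 1
        if card.streak == HITS_TO_ADVANCE:
            card.box = min(card.box + 1, max(INTERVAL_DAYS))  # box four is the ceiling
            card.streak = 0
    else:
        card.box, card.streak = 1, 0  # any miss sends it back to box one
    card.due = today + timedelta(days=INTERVAL_DAYS[card.box])
    return card


def due_cards(cards: list[Card], today: date) -> list[Card]:
    return [c for c in cards if c.due <= today]


card = Card("¿Me lo puede repetir?", due=date(2024, 1, 1))
review(card, hit=True, today=date(2024, 1, 1))  # streak 1, stays in box 1
review(card, hit=True, today=date(2024, 1, 2))  # second hit in a row: box 2
print(card.box, card.due)                       # 2 2024-01-04
```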

The Architecture

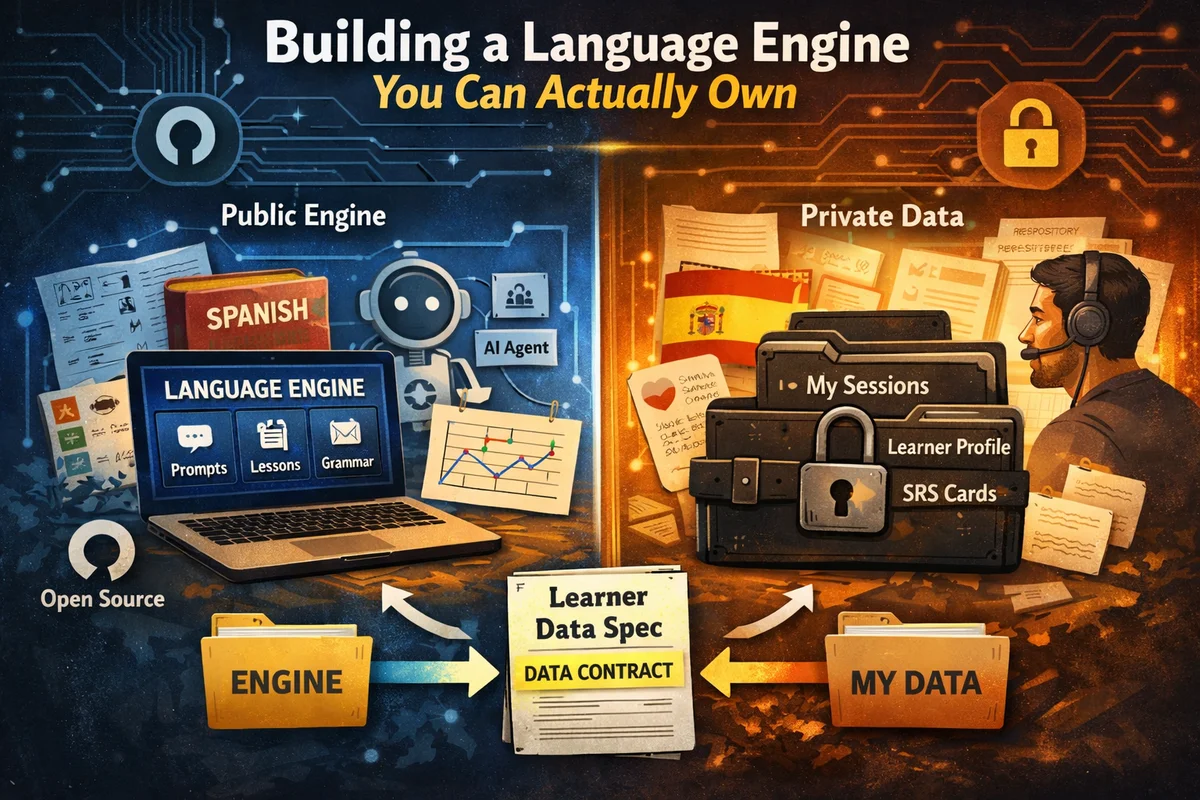

The engine and your personal data live in separate places.

Everything described above is the public repo: prompts, worlds, the reference library, methods, memory techniques, config templates. It contains nothing about any specific learner. The engine is GPL-3.0 (open source, share derivatives); the reference content is CC BY-SA 4.0 (fork it, adapt it, credit the source, and share adaptations under the same license). Fork it, adapt it, build your own version for Mandarin or Portuguese.

Your personal data (session transcripts, friction logs, SRS card state, mastery tracking) lives in your own space. A short spec document in the repo's docs folder tells you what files to create and what format they take. Read it in ten minutes. Set it up once.

If all you want is the reference library on your phone, clone the repo and start reading. If you want the full system with agent mode and friction logging, the spec shows you how. Both paths work.

What Compounds

The engine improves over time. Better prompts. Richer scenario ladders. Deeper reference material. Anyone using the system can contribute back.

But the personal side is what I keep thinking about. Every session generates data. Friction logs, transcripts, SRS progressions, observations about how you specifically learn. That record compounds. Come back after a gap and every session is still there. The friction log from your first McDonald's order. The debrief where something clicked. That's yours. No company sunset, no subscription lapse, no server migration can touch it.

That's what owning a system means. Not the engine. The engine is free. Owning the record of your own work.

Velocidad is open source under GPL-3.0. The reference content (scenarios, vocabulary, memory techniques) is CC BY-SA 4.0: fork it, adapt it, share it. The Learner Data Spec shows you how to set up your private data side in ten minutes. No app. No account. Just files.